AI Didn't Kill Coding. It Killed Typing.

TL;DR

- AI coding is the latest abstraction layer in an 80-year pattern, not a disruption of coding itself

- Every previous layer (assembly, compiled languages, scripting) was dismissed as "not real programming" by practitioners of the layer below

- Understanding the stack still matters. I shipped two production SaaS platforms using AI coding, and the moments that mattered were the ones where I had to go below the abstraction.

Before there were computers, there were calculators. Not the devices. The people.

Rooms full of them. Hundreds of humans sitting at desks, doing arithmetic by hand, passing partial results along assembly lines of computation. Insurance companies calculating actuarial tables. Military operations computing ballistics trajectories. The job title was literally "calculator."

Then electronic computers arrived, and the calculators weren't needed. Except programming those computers meant writing machine code: raw ones and zeros, fed in through punch cards. The "calculator" job evolved into the "programmer" job, but the work was still manual, painstaking, and intimate with the hardware.

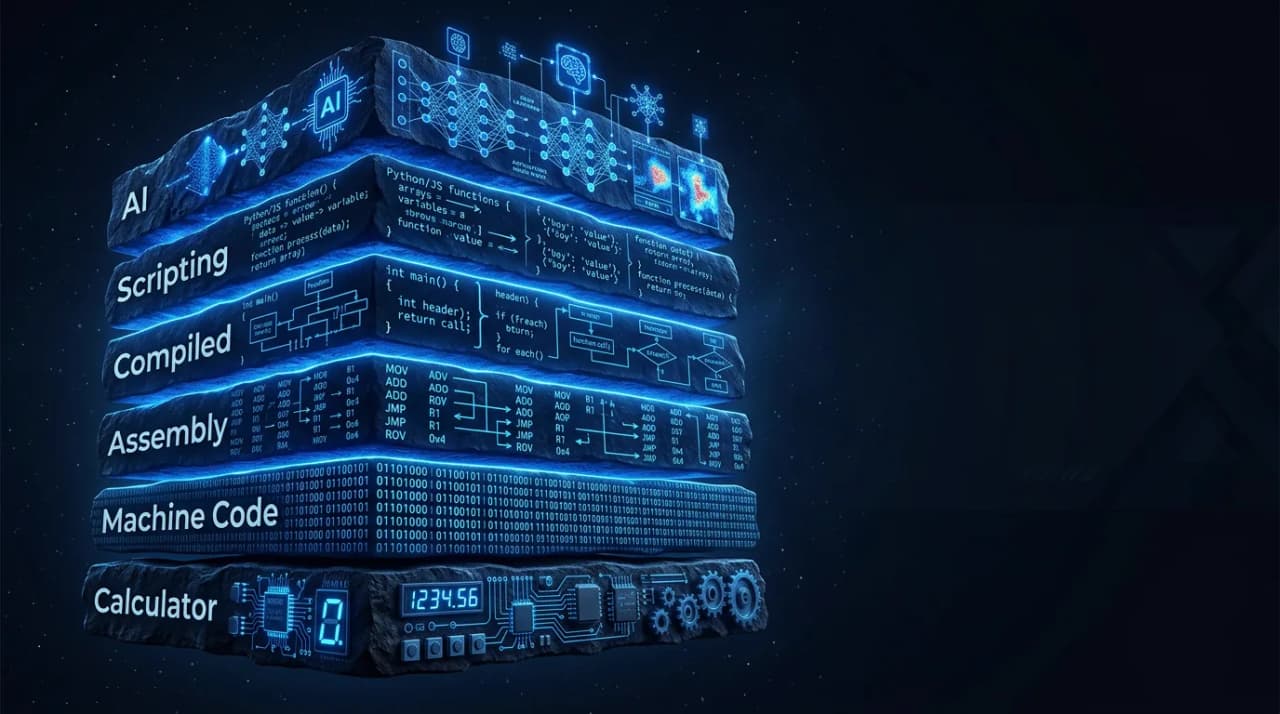

Then assembly language abstracted away machine code. Then compiled languages like C abstracted away assembly. Then scripting languages like Python and JavaScript abstracted away compiled languages. And at every single transition, the practitioners of the lower layer looked at the new one and said: "That's not real programming."

C programmers dismissed scripting as cheating. "Real programmers manage their own memory." JavaScript developers were told they weren't doing "real engineering." Python was "too slow to be taken seriously."

They were all wrong. Each abstraction layer was real programming. It was just programming at a different altitude.

Counting human calculators and machine code as the first two, AI coding is the sixth layer. And the pattern is repeating exactly.

Why does every new programming abstraction get dismissed as "not real coding"?

The dismissal always follows the same script.

Phase one: "It's not real." The scripting language can't do memory management. The AI can't architect a system. Therefore it's a toy, useful for prototypes but not for production.

Phase two: "It's only for beginners." Serious engineers write C. Serious engineers understand the compiler. Serious engineers don't need AI to write their code. The implication: anyone using the new layer is a lesser practitioner.

Phase three: "Oh wait, it's actually good." The scripting language gets a JIT compiler. The AI starts writing code that passes production test suites. The new layer captures the majority of new development, and the practitioners of the old layer either adapt or become specialists in a shrinking niche.

Phase four: normalisation. Most new code is written in the new layer. The old layer becomes infrastructure that the new layer relies on but that fewer practitioners interact with directly. The skills of the old layer are still valuable, but they're valuable in a different way: as debugging depth rather than daily practice.

We're somewhere between phase two and phase three right now with AI coding. Experienced engineers are increasingly acknowledging that AI writes code as well as or better than they do for a significant share of routine tasks. The dismissal is losing ground to the output.

What I learned by living the transition

I came to AI coding from the other direction. Not as an engineer who added AI to an existing practice, but as a product leader who used AI to start building.

In late 2025, I built two production SaaS platforms solo: OpenChair (beauty and wellness) and OpenTradie (trades and contractors). Fifty-plus AI features each. Six LLMs in orchestration. Native mobile. Stripe billing. AI voice receptionists handling real phone calls. Multi-tenant architecture. Production users.

I did this with AI coding. Claude, Cursor, Replit. The AI wrote the vast majority of the code. And here's what I found: the abstraction worked beautifully for about 85% of the work, and the remaining 15% was where everything that mattered happened.

The 85% that the abstraction handles:

- CRUD operations, API routes, database schemas

- UI component generation, layout, styling

- Standard integrations (Stripe, Twilio, email providers)

- Test scaffolding and boilerplate

- Documentation and type definitions

This is the "typing" that AI killed. The mechanical act of translating known patterns into code. It's real work, it used to take real time, and it's now near-instant. Good.

The 15% where you need to go below the abstraction:

- Architectural decisions with long-term consequences (database design, state management patterns, API contract design)

- Performance debugging when the AI-generated code is functional but slow

- Security review when the AI generates code that works but introduces vulnerabilities

- Multi-system integration where the AI doesn't have context about how your specific systems interact

- Production incident diagnosis where the AI-generated code fails in ways the AI can't predict from the prompt alone
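To make "functional but slow" concrete, here is a minimal sketch of one common shape of the problem: a query inside a loop (often called N+1) next to the single join that returns the same rows. Everything in it is an assumption for illustration, not code from either platform: the `clients` and `bookings` tables, the `CountingDb` helper, and the use of in-memory SQLite in place of a real production database.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with 50 clients and 50 bookings."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE clients (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
        CREATE TABLE bookings (
            id INTEGER PRIMARY KEY,
            client_id INTEGER NOT NULL REFERENCES clients(id),
            service TEXT NOT NULL
        );
        """
    )
    conn.executemany(
        "INSERT INTO clients (id, name) VALUES (?, ?)",
        [(i, f"client-{i}") for i in range(1, 51)],
    )
    conn.executemany(
        "INSERT INTO bookings (client_id, service) VALUES (?, ?)",
        [(i, "haircut") for i in range(1, 51)],
    )
    conn.commit()
    return conn


class CountingDb:
    """Hypothetical wrapper that counts how many queries a function issues."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.queries = 0

    def query(self, sql: str, params: tuple = ()) -> list:
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def bookings_n_plus_one(db: CountingDb) -> list:
    """Correct output, but one extra query per booking row."""
    rows = db.query("SELECT id, client_id, service FROM bookings ORDER BY id")
    result = []
    for _booking_id, client_id, service in rows:
        name = db.query("SELECT name FROM clients WHERE id = ?", (client_id,))[0][0]
        result.append((name, service))
    return result


def bookings_joined(db: CountingDb) -> list:
    """Same output from a single query that lets the database do the join."""
    return db.query(
        """
        SELECT c.name, b.service
        FROM bookings AS b
        JOIN clients AS c ON c.id = b.client_id
        ORDER BY b.id
        """
    )


if __name__ == "__main__":
    conn = make_db()
    slow, fast = CountingDb(conn), CountingDb(conn)
    assert bookings_n_plus_one(slow) == bookings_joined(fast)
    print(f"loop version: {slow.queries} queries, join version: {fast.queries} query")
```

Both functions pass the same functional test, which is why this kind of issue tends to survive review. The difference only shows up when you count round trips, and against a networked database each round trip carries latency.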

That 15% is where understanding the stack matters. When my AI voice agent had latency issues, the fix wasn't in the prompt. It was in understanding WebSocket connection pooling and Twilio's media stream architecture. When multi-tenant data isolation had a subtle bug, the fix required understanding Postgres row-level security policies at a level the AI consistently got wrong.
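The class of bug behind a tenant-isolation failure can be sketched in a few lines. This is an assumption-laden illustration, not the actual fix: SQLite has no row-level security, so the example shows the application-level version of the rule that a Postgres row-level security policy would enforce inside the database. The `appointments` table, the tenant IDs, and both function names are hypothetical.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """In-memory database holding rows for two hypothetical tenants."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE appointments (
            id INTEGER PRIMARY KEY,
            tenant_id TEXT NOT NULL,
            customer TEXT NOT NULL
        );
        INSERT INTO appointments (tenant_id, customer) VALUES
            ('salon-a', 'Alice'),
            ('salon-a', 'Bob'),
            ('salon-b', 'Carol');
        """
    )
    return conn


def customers_unscoped(conn: sqlite3.Connection) -> list:
    """Looks fine with one tenant in the database; leaks rows with two."""
    rows = conn.execute("SELECT customer FROM appointments ORDER BY id")
    return [row[0] for row in rows]


def customers_for_tenant(conn: sqlite3.Connection, tenant_id: str) -> list:
    """Every read is filtered by the caller's tenant, passed as a bound parameter."""
    rows = conn.execute(
        "SELECT customer FROM appointments WHERE tenant_id = ? ORDER BY id",
        (tenant_id,),
    )
    return [row[0] for row in rows]


if __name__ == "__main__":
    conn = make_db()
    print(customers_unscoped(conn))               # includes another tenant's customer
    print(customers_for_tenant(conn, "salon-a"))  # only salon-a rows
```

The unscoped query is not wrong in any way a single-tenant test would catch, which is what makes the bug subtle. Enforcing the filter in the database rather than in each query removes the chance of forgetting it, but only if you understand how the policy decides which tenant the current connection belongs to.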

The abstraction is excellent. But you can't use the abstraction well unless you understand what it's abstracting.

The scripting language parallel

This is exactly what happened with scripting languages, and the parallel is worth studying.

When Python and JavaScript captured the majority of new development, C and assembly didn't disappear. They became the foundation layer that scripting languages relied on. CPython, the reference Python interpreter, is written in C. V8, the JavaScript engine behind Chrome and Node.js, is written in C++ and compiles JavaScript to machine code at runtime. The people who understand those lower layers can debug problems that scripting-only developers can't.

But the scripting-only developers still shipped enormous amounts of valuable software. Instagram was built on Python and Django. Netflix moved its web UI layer to Node.js. The abstraction was good enough for production in the vast majority of cases. The lower layers mattered at the margins, and the margins were important margins, but they were margins.

AI coding follows the same pattern. Product builders using AI will ship enormous amounts of valuable software without ever needing to understand memory management or network protocols. But the ones who do understand those layers will ship better software, debug faster, and make architectural decisions that prevent problems rather than reacting to them.

The optimal strategy isn't "learn AI coding instead of real coding" or "learn real coding instead of AI coding." It's depth plus abstraction. Go deep enough to understand what the AI is doing. Then let the AI handle the typing.

What this means for product builders

If you're a product person who's been using AI to build, here's what I'd recommend based on shipping production systems this way:

Read the code the AI writes. Not every line, but enough to understand the patterns it's choosing. When it generates a React component, do you understand the state management approach? When it writes a database query, do you know why it chose that join strategy? You don't need to write this code yourself, but you need to be able to evaluate it.

Learn one layer below your comfort zone. If you're comfortable with UI code, learn basic API design. If you understand API routes, learn how the database query planner works. If you get databases, learn how the network layer handles your API calls. Each layer of understanding gives you better judgment about the AI's output at your primary layer.

Watch the AI think. When AI coding tools show their reasoning (Claude's extended thinking, Cursor's chain of thought), read it. You'll learn architecture by osmosis. You'll develop pattern recognition for when the AI is making a confident correct decision versus a confident wrong one. This is the new form of code review: reviewing the AI's reasoning, not just its output.

Break things deliberately. When the AI-generated code works, change something and see what breaks. Understanding the failure modes of AI-generated code teaches you more about the code's structure than reading it does. This is how you develop the intuition to catch problems before they reach production.
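As a small sketch of what breaking things deliberately can look like, the example below changes one comparison operator in a booking-overlap check and reports which checks notice. The function, the mutated copy, and the three checks are all hypothetical and chosen only for illustration. Hours are plain integers to keep it short.

```python
from typing import Callable

Interval = tuple[int, int]
SlotFn = Callable[[int, int, list[Interval]], bool]


def is_slot_free(start: int, end: int, booked: list[Interval]) -> bool:
    """True if the half-open slot [start, end) overlaps no booked interval."""
    return all(end <= s or start >= e for s, e in booked)


def is_slot_free_mutated(start: int, end: int, booked: list[Interval]) -> bool:
    """The same function with one deliberate change: `<=` became `<`."""
    return all(end < s or start >= e for s, e in booked)


# Each check takes an implementation and returns True when it behaves as intended.
CHECKS: dict[str, Callable[[SlotFn], bool]] = {
    "free when far from bookings": lambda f: f(13, 14, [(9, 10)]) is True,
    "blocked when overlapping": lambda f: f(9, 11, [(10, 12)]) is False,
    "back-to-back slots allowed": lambda f: f(9, 10, [(10, 11)]) is True,
}


def failing_checks(fn: SlotFn) -> list[str]:
    """Names of the checks that the given implementation does not satisfy."""
    return [name for name, check in CHECKS.items() if not check(fn)]


if __name__ == "__main__":
    print("original:", failing_checks(is_slot_free))
    print("mutated: ", failing_checks(is_slot_free_mutated))
```

If a change like this breaks nothing, that is also information: either the line doesn't matter or no check covers it. Here only the back-to-back case catches the change, which tells you where the boundary logic lives.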

Coding isn't dying. Typing is.

The act of manually translating intentions into syntax is being automated, and it should be. It was always the least valuable part of software development. The valuable parts (systems thinking, architectural judgment, debugging intuition, understanding user needs, choosing what to build) were never about typing.

AI killed the typing. Coding is the thing that remains after the typing is gone: the judgment, the architecture, the debugging, the taste.

Discovery died and prototyping ate it. Now typing is dying and thinking is eating it. Same pattern, different layer.

The people who understand this will build the next generation of software. The people arguing about whether AI coding is "real" programming are having the same argument C programmers had about scripting in 2005. History has already decided how that argument ends.

Frequently Asked Questions

Do you still need a computer science degree to build production software?

You don't need a degree, but you need the knowledge that a good degree represents: data structures, algorithms, systems design, and an understanding of how computers actually work. AI coding abstracts away syntax. It doesn't abstract away architectural thinking. The product builders who will struggle are the ones who skip the conceptual foundations and treat AI as a black box.

How do you evaluate AI-generated code if you didn't write it?

The same way a tech lead evaluates code written by a junior engineer: read it with an eye for patterns, anti-patterns, and architectural implications. Focus on the decisions the code makes (data model choices, error handling strategy, state management approach) rather than the syntax. If you can't evaluate the decisions, you need to learn more about the domain before relying on the AI's judgment.

Will AI coding tools eventually handle the 15% too?

Some of it, over time. Architectural reasoning is improving. Security scanning is getting better. But the 15% that requires understanding your specific system, your specific users, and your specific business constraints will remain human territory for the foreseeable future. The percentage might shrink to 10% or 5%, but it will be the most consequential 5%.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience building those platforms solo.