Two Production SaaS Platforms, One Builder: Solo Vertical SaaS

TL;DR

- I built a production vertical SaaS platform for beauty and wellness (OpenChair) as a solo operator: 50+ AI features, native mobile apps, Stripe billing, AI voice receptionist, launching this week

- To validate the approach wasn't a one-off, I started building a second platform for a completely different vertical (OpenTradie for trades and contractors), and the core architecture transferred cleanly

- The economics have inverted: what once required a 15-person team and a $2M seed round now requires one AI-augmented builder and cloud infrastructure costs

I keep hearing the same objection from enterprise product leaders: "Sure, AI helps you prototype faster. But production software? With billing, multi-tenancy, compliance, mobile apps? That still requires a team."

It doesn't. I know because I built it.

OpenChair is a production SaaS platform for beauty and wellness venues. It launches this week. 50+ AI features. Native mobile apps on the App Store and Play Store. Stripe billing with Connect and Terminal. Multi-tenant architecture with 68+ row-level security policies. AI voice receptionist handling real phone calls. 180+ database migrations. 130+ screens across web and mobile. 12+ third-party integrations.

I built it alone.

Not as a prototype. Not as an MVP with a landing page and a waitlist. As production software with real billing, real data isolation, real App Store deployment. The kind of platform that, three years ago, would have required a full-stack engineering team, a designer, a DevOps person, and a QA lead.

And then, to test whether this was repeatable or just a one-time effort, I started building OpenTradie: an AI-first operating system for trade businesses. Different vertical. Different operational patterns. Same approach. The core architecture transferred. The domain-specific features (crew dispatch, job costing, quote generation) were net new, but the chassis held.

The thesis isn't "AI makes development faster." That's obvious and boring. The thesis is that AI has fundamentally changed the economics of vertical SaaS, and most people haven't processed what that means.

How have the economics of building vertical SaaS changed?

Building a vertical SaaS product used to follow a predictable formula. Raise $1.5M to $3M. Hire 8 to 15 people. Spend 12 to 18 months building v1. Pray for product-market fit before the money runs out.

The cost structure was dominated by labour. Engineers, designers, QA, DevOps. Salaries, benefits, management overhead. A senior full-stack engineer in Sydney runs $180K+ a year fully loaded. Across a team of that size, you're burning $80K to $150K per month before a single customer pays you anything, and that burn continues for the full 12 to 18 months.

Now run the same calculation with AI-augmented solo development. Cloud infrastructure: ~$200/month. AI inference via OpenRouter with prompt caching: negligible at pre-scale volumes (90% cost reduction through caching). Developer accounts: US$99/year for the Apple Developer Program, plus a one-time US$25 registration fee for Google Play. Stripe fees: percentage of revenue, so zero until you earn.

The monthly burn for a production vertical SaaS platform is under $500. Not $50,000. Not $150,000. Under $500.

That changes who can build software, what software gets built, and which markets are worth targeting. Verticals that were "too small" for a funded startup (beauty salons, trade contractors, local services) suddenly make economic sense when the build cost drops by two orders of magnitude.
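The "two orders of magnitude" claim can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted above ($80K to $150K per month for a funded team, a $500 per month ceiling for the solo build); the function and variable names are illustrative, not part of either platform.

```typescript
// Figures from the article: funded-team burn of $80K to $150K per month
// versus a solo ceiling of $500 per month.
interface BurnRange {
  lowPerMonth: number;
  highPerMonth: number;
}

const fundedTeamBurn: BurnRange = { lowPerMonth: 80000, highPerMonth: 150000 };
const soloBurnCeiling = 500;

// How many times larger the funded-team burn is than the solo burn.
function burnMultiple(team: BurnRange, soloPerMonth: number): { low: number; high: number } {
  return {
    low: team.lowPerMonth / soloPerMonth,
    high: team.highPerMonth / soloPerMonth,
  };
}

// Total spend over a build period at a constant monthly burn.
function totalSpend(monthlyBurn: number, months: number): number {
  return monthlyBurn * months;
}

const multiple = burnMultiple(fundedTeamBurn, soloBurnCeiling);
console.log(multiple); // { low: 160, high: 300 }
console.log(totalSpend(soloBurnCeiling, 18)); // 9000
console.log(totalSpend(fundedTeamBurn.lowPerMonth, 12)); // 960000
```

A 160x to 300x gap sits comfortably between 100x and 1,000x, which is what "two orders of magnitude" means here. Even an 18-month solo build at the $500 ceiling totals $9,000 in infrastructure, less than one week of the funded team's low-end burn.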

The vertical SaaS chassis

The first build is the hardest. You're solving infrastructure problems (multi-tenancy, billing, AI orchestration, mobile deployment) and domain problems (scheduling, client management, service catalogues) simultaneously. Every decision is a first decision.

The second build reveals what's reusable.

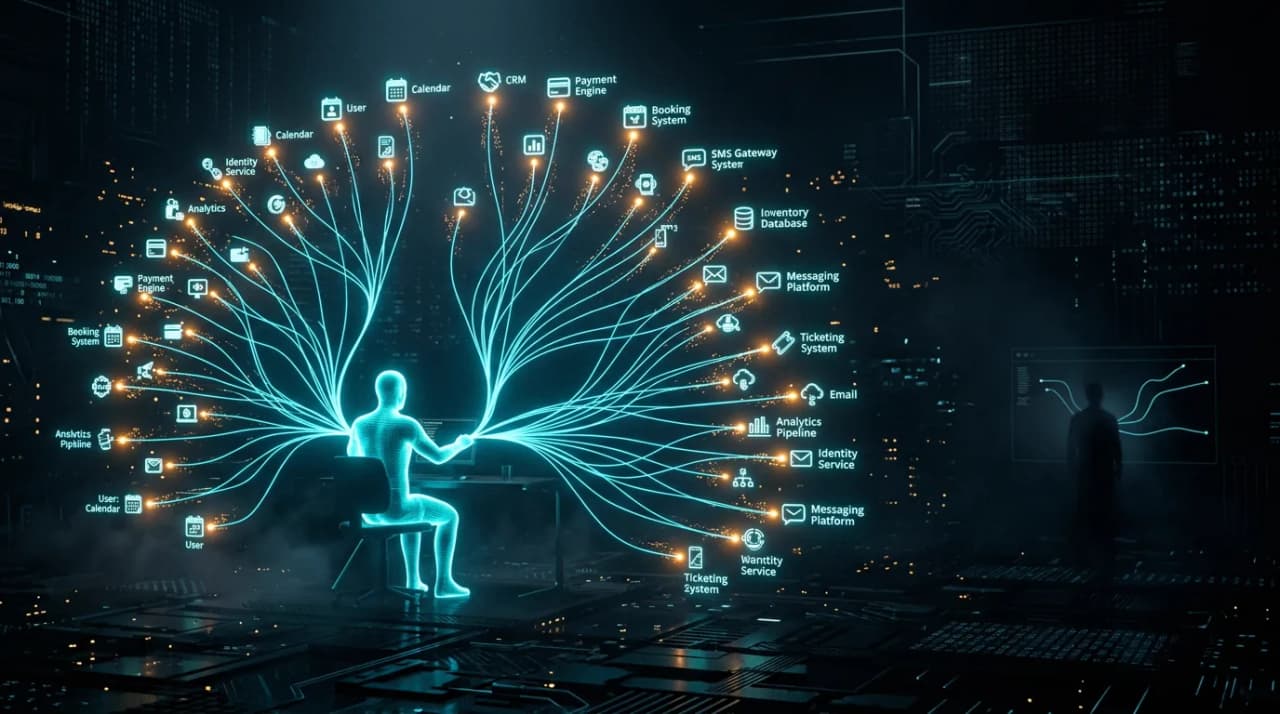

OpenChair's infrastructure stack transferred cleanly to OpenTradie. tRPC with end-to-end type safety across 63+ routers. Supabase with aggressive row-level security for multi-tenant isolation. OpenRouter for multi-model orchestration across six LLMs (including Claude Sonnet, Claude Haiku, GPT-4o Mini, and Gemini). Stripe for billing, Connect for marketplace payments, Terminal for point-of-sale. The Vercel AI SDK for streaming responses.

This is the reusable vertical SaaS chassis. Authentication, multi-tenancy, billing, AI orchestration, real-time sync, mobile deployment pipeline. Once it's built, a new vertical is roughly 30% to 40% chassis reuse and 60% to 70% domain-specific features.
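To make the chassis and domain split concrete, here is a minimal sketch of a tenant-scoped store reused by two verticals. Every name in it is hypothetical. In the stack described above, isolation is enforced by row-level security in the database; this in-memory class only mirrors that predicate so the shape of the reuse is visible.

```typescript
// Hypothetical names throughout; not taken from OpenChair or OpenTradie.
interface TenantContext {
  tenantId: string;
}

interface TenantRow {
  tenantId: string;
}

// In-memory stand-in for a tenant-isolated table. A real deployment would
// rely on database row-level security rather than application code.
class TenantScopedStore<T extends TenantRow> {
  private rows: T[] = [];

  // Rejects writes whose tenantId does not match the caller's tenant.
  insert(ctx: TenantContext, row: T): void {
    if (row.tenantId !== ctx.tenantId) {
      throw new Error("cross-tenant write rejected");
    }
    this.rows.push(row);
  }

  // Returns only the rows that belong to the caller's tenant.
  list(ctx: TenantContext): T[] {
    return this.rows.filter((r) => r.tenantId === ctx.tenantId);
  }
}

// Domain layer: the row shapes differ per vertical, the store does not.
interface Booking extends TenantRow { id: string; service: string; }
interface Job extends TenantRow { id: string; siteAddress: string; }

const bookings = new TenantScopedStore<Booking>();
const jobs = new TenantScopedStore<Job>();

const salon: TenantContext = { tenantId: "salon-a" };
const plumber: TenantContext = { tenantId: "plumber-b" };

bookings.insert(salon, { tenantId: "salon-a", id: "b1", service: "cut and colour" });
jobs.insert(plumber, { tenantId: "plumber-b", id: "j1", siteAddress: "12 Example St" });

console.log(bookings.list(salon).length);   // 1
console.log(bookings.list(plumber).length); // 0
```

The generic store is the chassis part; `Booking` and `Job` are the domain part. Swapping verticals changes the row types and the logic built on top of them, not the isolation rule.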

That 60% to 70% is where the product decisions live. For OpenChair: appointment scheduling with overlapping bookings, client history with preference tracking, product inventory, and vision-based style recommendations. For OpenTradie: crew dispatch with travel time calculation, job costing, quote generation, and equipment tracking. Same AI infrastructure, completely different product logic.

Beauty and wellness is appointment-based, individual-client focused, preference-driven. Trades are job-based, crew-dispatched, multi-site, urgency-driven. The operational patterns couldn't be more different. The fact that the same chassis supports both is what makes the model repeatable.

The AI voice receptionist: where vertical depth matters

OpenChair includes an AI voice receptionist built on Retell AI and Twilio. It answers real phone calls, qualifies callers, books appointments, and hands off to a human when it can't handle the request.

Building a voice agent that works in a demo takes a weekend. Building one that works in production takes weeks of conversation flow design, latency management, and failure mode handling. The caller doesn't know they're talking to an AI, and the experience has to hold up when they mumble, interrupt, change their mind, or ask something unexpected.

For beauty venues, the voice agent handles booking enquiries: "I'd like a cut and colour next Thursday afternoon." The conversation flow is structured around service selection, provider preference, availability checking, and confirmation. For trades (in OpenTradie), the same underlying technology handles job enquiries: "My hot water system is leaking, can someone come today?" Completely different conversation flows, different urgency classification, different handoff logic.

This is where vertical AI earns its margin. A horizontal voice agent platform gives you the building blocks. A vertical implementation gives you the conversation flows, the domain vocabulary, the integration with scheduling and dispatch systems, and the fallback logic that matches how that specific industry actually operates. I've written a full voice agent playbook covering the product decisions in detail.
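One way to picture "same technology, different conversation flow" is a shared step function driven by per-vertical configuration. The sketch below is an assumption-heavy illustration: the slot names, the keyword list, the failed-turn limit, and the policy of handing urgent calls to a human are all hypothetical, and simple keyword matching stands in for whatever classifier a real voice agent would use. It does not call Retell AI or Twilio.

```typescript
// Hypothetical types and values, for illustration only.
interface VerticalFlow {
  vertical: string;
  requiredSlots: string[];   // details to collect before confirming
  urgentKeywords: string[];  // phrases that mark a call as urgent
  handoffOnUrgent: boolean;  // assumed policy: urgent calls go to a human
}

interface CallState {
  transcript: string;
  filledSlots: Record<string, string>;
  failedTurns: number;       // turns the agent could not interpret
}

type NextStep =
  | { kind: "ask"; slot: string }
  | { kind: "confirm" }
  | { kind: "handoff"; reason: string };

const MAX_FAILED_TURNS = 2;

function isUrgent(flow: VerticalFlow, transcript: string): boolean {
  const text = transcript.toLowerCase();
  return flow.urgentKeywords.some((k) => text.indexOf(k) !== -1);
}

// Decides what the agent does next: hand off, ask for a missing detail, or confirm.
function nextStep(flow: VerticalFlow, state: CallState): NextStep {
  if (state.failedTurns >= MAX_FAILED_TURNS) {
    return { kind: "handoff", reason: "caller not understood" };
  }
  if (flow.handoffOnUrgent && isUrgent(flow, state.transcript)) {
    return { kind: "handoff", reason: "urgent request" };
  }
  for (const slot of flow.requiredSlots) {
    if (!(slot in state.filledSlots)) {
      return { kind: "ask", slot };
    }
  }
  return { kind: "confirm" };
}

const beautyFlow: VerticalFlow = {
  vertical: "beauty",
  requiredSlots: ["service", "provider", "time"],
  urgentKeywords: [],
  handoffOnUrgent: false,
};

const tradesFlow: VerticalFlow = {
  vertical: "trades",
  requiredSlots: ["problem", "address", "time"],
  urgentKeywords: ["leaking", "flooding", "no power"],
  handoffOnUrgent: true,
};

const beautyStep = nextStep(beautyFlow, {
  transcript: "I'd like a cut and colour next Thursday afternoon.",
  filledSlots: { service: "cut and colour" },
  failedTurns: 0,
});

const tradesStep = nextStep(tradesFlow, {
  transcript: "My hot water system is leaking, can someone come today?",
  filledSlots: {},
  failedTurns: 0,
});

console.log(beautyStep); // { kind: 'ask', slot: 'provider' }
console.log(tradesStep); // { kind: 'handoff', reason: 'urgent request' }
```

The step function is identical for both verticals. The vertical depth lives in the configuration: which details matter, what counts as urgent, and when a human takes over.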

Multi-model orchestration is a product decision

One of the earliest architectural choices was routing across multiple LLMs rather than committing to a single provider. OpenRouter makes this operationally simple. The product decision is which model to use for which task.

Claude Sonnet handles complex generation: business intelligence narratives, growth coaching insights, detailed client communications. Claude Haiku handles high-volume, low-complexity tasks: classification, extraction, simple formatting. GPT-4o Mini and Gemini handle specific tasks where their cost-quality tradeoffs are optimal.
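A routing decision like this usually ends up as a small lookup table. The sketch below is one possible shape, not the platform's actual router. The task names are paraphrased from the paragraph above, the model labels are plain tier names rather than OpenRouter model identifiers, and the choice of fallback for each task is an assumption made purely for illustration.

```typescript
type TaskKind =
  | "bi_narrative"
  | "growth_coaching"
  | "client_message"
  | "classification"
  | "extraction"
  | "formatting";

// Tier labels only; these are not real OpenRouter model slugs.
type ModelLabel = "claude-sonnet" | "claude-haiku" | "gpt-4o-mini" | "gemini";

// Ordered preference per task. The first entry follows the article;
// the second (fallback) entry is a hypothetical choice.
const ROUTES: Record<TaskKind, ModelLabel[]> = {
  bi_narrative: ["claude-sonnet", "gemini"],
  growth_coaching: ["claude-sonnet", "gemini"],
  client_message: ["claude-sonnet", "gemini"],
  classification: ["claude-haiku", "gpt-4o-mini"],
  extraction: ["claude-haiku", "gpt-4o-mini"],
  formatting: ["claude-haiku", "gpt-4o-mini"],
};

// Returns the first preferred model that is not currently marked unavailable.
function pickModel(task: TaskKind, unavailable: ModelLabel[] = []): ModelLabel {
  for (const candidate of ROUTES[task]) {
    if (unavailable.indexOf(candidate) === -1) {
      return candidate;
    }
  }
  throw new Error("no model available for task: " + task);
}

console.log(pickModel("bi_narrative"));                     // claude-sonnet
console.log(pickModel("classification"));                   // claude-haiku
console.log(pickModel("classification", ["claude-haiku"])); // gpt-4o-mini
```

Keeping the table separate from the call sites means a model swap is a one-line change, which matters when the model line-up shifts every quarter.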

Prompt caching through OpenRouter reduced inference costs by 90%. That's not a rounding error. At scale, the difference between cached and uncached inference is the difference between a profitable AI feature and one that bleeds margin with every interaction. I've written about this tradeoff in the audit tax analysis.

The point isn't the specific models. Models change every quarter. The point is that building for orchestration rather than single-model dependence is a product architecture decision that determines your cost structure, your quality ceiling, and your vendor risk.

What this means for vertical SaaS

Three implications that most people haven't fully absorbed.

The addressable market for vertical SaaS just expanded massively. Verticals with 50,000 to 200,000 potential customers were too small to justify venture-funded development. They're not too small for a solo operator with $500/month in infrastructure costs. Beauty salons. Trade contractors. Yoga studios. Veterinary clinics. Dog groomers. Every underserved vertical with manual workflows and phone-based operations is now a viable software market. The business viability framework I use to evaluate these opportunities focuses on exactly this: unit economics at the individual operator level, not the venture-scale level.

The competitive moat shifts from code to domain depth. When code is a commodity, the moat moves to understanding the customer's operational reality. I can build the booking system in a week. Understanding that a hairdresser's schedule is fundamentally different from a massage therapist's schedule (overlapping appointments vs. sequential, different buffer times, different no-show patterns) requires domain knowledge that AI coding tools don't generate. Vertical depth is the moat.
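The scheduling difference is easy to state and easy to get wrong in code. As a minimal sketch (a hypothetical data model with illustrative durations): treat an appointment as the set of intervals in which the provider is actually hands-on. A colour service then leaves a gap while the colour processes, and a sequential service is one continuous block plus its buffer.

```typescript
// Hypothetical model; times are minutes from midnight, durations illustrative.
interface Interval {
  start: number;
  end: number; // exclusive
}

interface Appointment {
  id: string;
  providerBusy: Interval[]; // spans where the provider is hands-on
}

function overlaps(a: Interval, b: Interval): boolean {
  return a.start < b.end && b.start < a.end;
}

// Two appointments conflict only if the provider is hands-on in both at once.
function conflicts(a: Appointment, b: Appointment): boolean {
  return a.providerBusy.some((x) => b.providerBusy.some((y) => overlaps(x, y)));
}

// Hairdressing colour: apply, wait while it processes, then finish.
function colourService(
  id: string,
  start: number,
  applyMin: number,
  processMin: number,
  finishMin: number
): Appointment {
  const applyEnd = start + applyMin;
  const finishStart = applyEnd + processMin;
  return {
    id,
    providerBusy: [
      { start, end: applyEnd },
      { start: finishStart, end: finishStart + finishMin },
    ],
  };
}

// Massage-style service: one continuous block plus a buffer afterwards.
function sequentialService(id: string, start: number, durationMin: number, bufferMin: number): Appointment {
  return { id, providerBusy: [{ start, end: start + durationMin + bufferMin }] };
}

const colour = colourService("colour", 540, 30, 40, 20);      // busy 9:00-9:30 and 10:10-10:30
const quickCut = sequentialService("cut", 570, 30, 0);        // busy 9:30-10:00
const massageA = sequentialService("massage-a", 540, 60, 15); // busy 9:00-10:15
const massageB = sequentialService("massage-b", 600, 60, 15); // busy 10:00-11:15

console.log(conflicts(colour, quickCut));   // false
console.log(conflicts(massageA, massageB)); // true
```

A naive "start to end" overlap check would reject the cut during the colour's processing window and cost the salon a bookable slot. Knowing that the gap exists, and how long it is for each service, is the domain knowledge.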

The team size assumption is wrong. The default mental model is still "you need a team to build production software." That assumption shapes hiring plans, funding strategies, and competitive analysis. If your competitive analysis assumes your competitor needs $2M and 18 months to ship a competing product, but they actually need $500/month and 8 weeks, your strategic planning is dangerously wrong.

I'm not arguing that every vertical SaaS product should be built solo. I'm arguing that the minimum viable team has dropped from 10 people to 1, and that changes the competitive dynamics of every vertical software market on the planet. OpenChair launches this week. It's the proof point. The product builder identity isn't an aspiration. It's an economic reality. What the grind actually looks like is a different story from the one usually told about AI development.

Frequently Asked Questions

Can a solo-built product actually compete with a funded team's product?

At the feature level, yes. AI-augmented development velocity is genuinely competitive with small teams. At the go-to-market level, it depends on the vertical. Verticals where customers evaluate software through trials and word-of-mouth (local services, SMB) favour product quality over sales force size. Verticals where enterprise sales cycles dominate (healthcare, finance) still favour funded teams with dedicated sales. Choose your vertical accordingly.

What breaks first when you scale as a solo operator?

Customer support and operations. The code scales fine (that's what cloud infrastructure is for). The AI features scale fine (that's what multi-model orchestration is for). What doesn't scale is responding to customer emails, handling billing disputes, debugging edge cases in production, and managing App Store review submissions simultaneously. The first hire for a solo-built vertical SaaS product should be operations, not engineering.

Is the 90% prompt caching cost reduction sustainable?

It depends on your usage patterns. Prompt caching pays off when requests share a long, identical prefix (system prompt, tool definitions, reference documents), which is common in vertical SaaS: the same types of requests, the same domain vocabulary, similar document structures. If your prompts vary a lot from request to request, cache hit rates will be lower. Monitor your actual cache hit rate and factor uncached costs into your pricing model as a floor.
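To see how sensitive the saving is to hit rate, a rough blended-cost calculation helps. This sketch assumes the 90% figure is the discount on a cache hit and that every other cost is unchanged. It ignores cache-write surcharges and output tokens, and the $1,000 uncached baseline is a made-up number for illustration.

```typescript
// cachedDiscount is the fractional price cut on cache hits; using 0.9 is an
// assumption that treats the article's 90% figure as the per-hit discount.
function blendedCostFactor(hitRate: number, cachedDiscount: number): number {
  if (hitRate < 0 || hitRate > 1 || cachedDiscount < 0 || cachedDiscount > 1) {
    throw new RangeError("hitRate and cachedDiscount must be between 0 and 1");
  }
  // Hits pay (1 - discount), misses pay full price.
  return 1 - hitRate * cachedDiscount;
}

function monthlyInferenceCost(uncachedCost: number, hitRate: number, cachedDiscount: number): number {
  return uncachedCost * blendedCostFactor(hitRate, cachedDiscount);
}

// Hypothetical $1,000/month uncached baseline at several hit rates.
const hitRates = [1, 0.8, 0.5, 0];
for (const rate of hitRates) {
  const cost = monthlyInferenceCost(1000, rate, 0.9);
  console.log("hit rate " + rate + ": $" + cost.toFixed(2));
}
// hit rate 1: $100.00
// hit rate 0.8: $280.00
// hit rate 0.5: $550.00
// hit rate 0: $1000.00
```

Under these assumptions the overall saving is simply hit rate times discount, so a 50% hit rate yields a 45% saving rather than 90%. That is the reason to price against the uncached figure and treat caching as margin.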

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience shipping AI products.