Stop Picking Winners in the Model Race. Build the Router Instead.

TL;DR

- The gap between top models is narrowing while their specialisations diverge, and no single model wins every task

- Hard-coding to a specific model version is technical debt; by the time you ship, the leaderboard will have flipped

- The real competitive advantage is your routing layer and your eval framework, not your model choice

The numbers for OpenAI's GPT-5.2 just dropped. The headline stats are eye-watering. The hype cycle is about to go into overdrive.

But if you put on your product hat and look past the benchmark screenshots, the story isn't "OpenAI Won." The story is that we've officially entered the era of commoditised super-intelligence. And if you're still building your product around a single model, you're doing it wrong.

The three-horse race that never ends

We are watching OpenAI, Google, and Anthropic trade the lead every few weeks. Each new release triggers the same cycle: breathless benchmarks, hot takes about who's "winning," and pressure from stakeholders to migrate to the latest leader immediately.

Don't take the bait.

The benchmarks actually tell a more nuanced story when you read them carefully. If you look at the FrontierMath scores, Gemini 3 Pro edges out GPT-5.2 on the hardest Tier 4 problems (18.8% vs 14.6%), even though OpenAI wins the generalist categories. Anthropic's Claude continues to lead on certain reasoning and coding tasks. Each model has a distinct "flavour": strengths and weaknesses that map to different use cases.

The gap between the top models is narrowing on aggregate benchmarks, but their specialisations are diverging. This is the critical insight that the hype cycle obscures. The question isn't "which model is best?" It's "which model is best for this specific task, at this cost, at this latency?"

That's a different question entirely. And it demands a different architecture.

Why is hard-coding to a single model technical debt?

If you are hard-coding your application and prompts to a specific model version today, you are building technical debt. Not might be. Are.

I've seen this pattern repeatedly. A team spends weeks optimising prompts for GPT-4o. They tune the temperature, craft the system messages, build few-shot examples that work perfectly with that model's quirks. They ship. Three weeks later, a new model drops that's cheaper, faster, and better at the core task, but the migration cost is enormous because every prompt assumes a specific model's behaviour.

This is the AI equivalent of hard-coding database queries instead of using an ORM. It works until it doesn't, and when it breaks, it breaks everywhere at once.

The fix isn't to avoid optimisation. It's to optimise at the right abstraction layer. Your prompts should express intent. Your routing layer should handle model selection. Your eval framework should validate that the output meets your quality bar regardless of which model produced it. The entire product architecture should anticipate model capabilities that don't exist yet, so that improvements land as free upgrades rather than rework.

When you build this way, a new model release isn't a migration project. It's a configuration change followed by an eval run.
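Here is a minimal sketch of what "a configuration change" can mean, assuming application code only ever names an intent and never a model. The provider and model names, the `ROUTES` table, and the helpers `resolve` and `with_override` are all hypothetical placeholders, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelConfig:
    provider: str            # placeholder provider label (assumption)
    model: str               # placeholder model identifier (assumption)
    temperature: float = 0.0


# Hypothetical routing table: the rest of the codebase refers to intents only.
ROUTES = {
    "summarise": ModelConfig("provider-a", "small-fast-model"),
    "complex_reasoning": ModelConfig("provider-b", "large-reasoning-model", 0.2),
}


def resolve(intent, routes=ROUTES):
    """Look up which model currently serves an intent."""
    try:
        return routes[intent]
    except KeyError:
        raise ValueError(f"No route configured for intent {intent!r}") from None


def with_override(routes, intent, config):
    """Return a new table with one intent repointed, leaving the original untouched."""
    return {**routes, intent: config}


# Trying a newly released model is a one-line change to the table,
# which you would then validate with an eval run before promoting it.
trial_routes = with_override(ROUTES, "summarise", ModelConfig("provider-c", "new-model"))
print(resolve("summarise", trial_routes).model)
```

Nothing that calls `resolve("summarise")` has to change when the table does, which is the point of putting model selection behind the intent.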

Orchestration is the new IP

The winning strategy isn't picking the best model. It's building the best router.

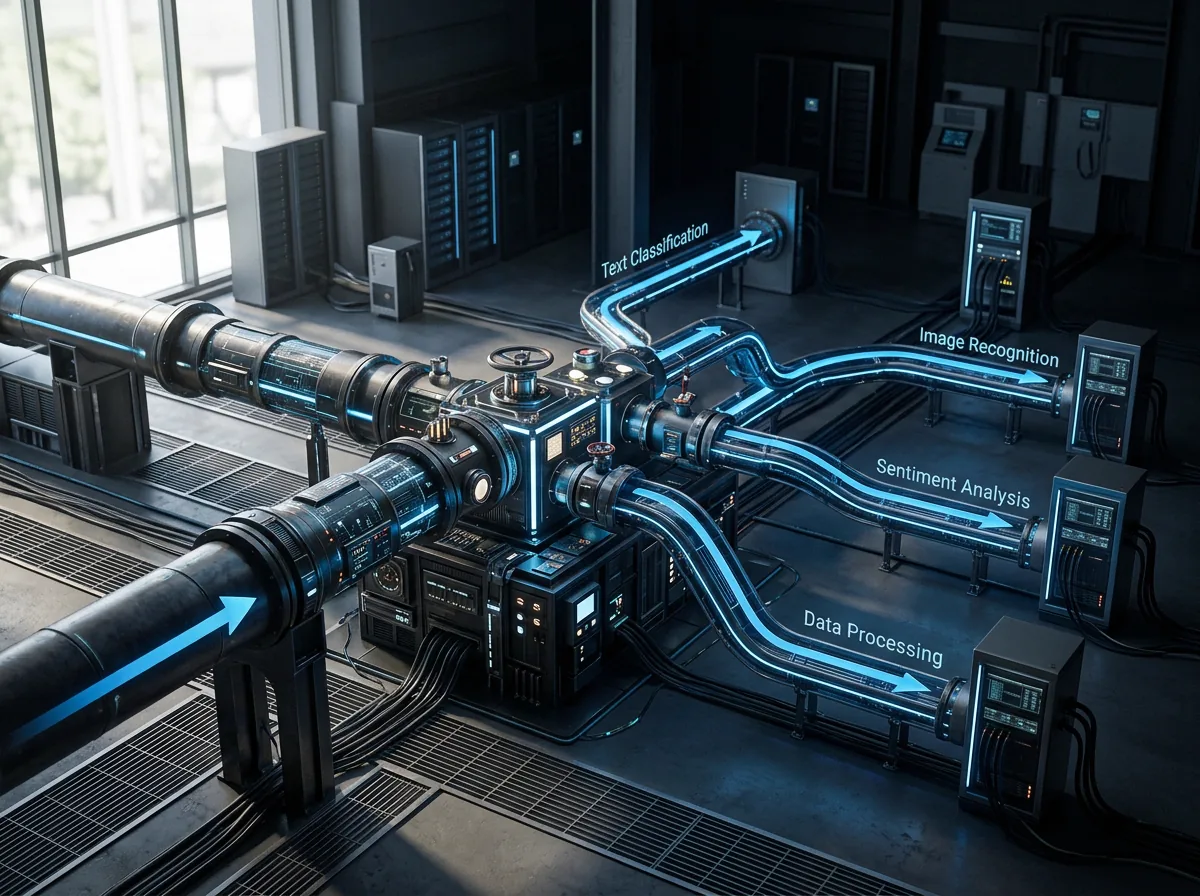

What does this look like in practice? An architecture that can dynamically send a complex reasoning task to GPT-5.2 with thinking enabled, route a simple summarisation task to a cheaper and faster model like Gemini Flash or Claude Haiku, and make that decision based on the task characteristics, latency requirements, and cost constraints, automatically, at runtime.
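As a rough illustration of that runtime decision, the sketch below filters candidate models by quality, latency, and cost constraints, then picks the cheapest one that qualifies. Every name and number here is an assumption for illustration: the `Candidate` and `Task` types, the model labels, and the scores, which in a real system would come from your own evals and provider pricing.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    quality: dict            # task kind -> score from your own evals, 0..1 (assumed)
    cost_per_call: float     # illustrative units, not real pricing
    p95_latency_ms: int


@dataclass
class Task:
    kind: str
    min_quality: float
    max_latency_ms: int
    max_cost: float


def route(task, candidates):
    """Pick the cheapest candidate that satisfies every constraint for this task."""
    eligible = [
        c for c in candidates
        if c.quality.get(task.kind, 0.0) >= task.min_quality
        and c.p95_latency_ms <= task.max_latency_ms
        and c.cost_per_call <= task.max_cost
    ]
    if not eligible:
        raise LookupError(f"No model satisfies the constraints for {task.kind!r}")
    # Cheapest first; break ties on latency.
    return min(eligible, key=lambda c: (c.cost_per_call, c.p95_latency_ms))


# Hypothetical candidates with made-up scores.
CANDIDATES = [
    Candidate("large-reasoning-model", {"reasoning": 0.90, "summarise": 0.90}, 10.0, 8000),
    Candidate("small-fast-model", {"reasoning": 0.50, "summarise": 0.85}, 1.0, 900),
]

print(route(Task("summarise", 0.80, 2000, 5.0), CANDIDATES).name)
print(route(Task("reasoning", 0.80, 15000, 20.0), CANDIDATES).name)
```

The selection rule (cheapest eligible model) is one design choice among several. A team that cares more about latency than cost would change the sort key, not the callers.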

This is what I mean by multi-model orchestration, and it's what I've been building in production. I detail the multi-model orchestration playbook in the handbook. The routing layer becomes your competitive advantage because it encodes your understanding of the cost-quality-latency tradeoffs for your specific use cases. (The audit tax math on manager-worker architectures makes the cost dimension viscerally concrete.) That understanding is hard-won, domain-specific, and not something a model provider can commoditise away from you.

Your model provider can release a better model tomorrow. They can't release a better understanding of your users' needs.

Evals are the unlock

This all hinges on one thing: none of it works without evals.

If you don't have a rigorous evaluation framework (your own internal benchmarks, tailored to your specific use cases, not the ones provided by OpenAI or Google), you can't take advantage of these rapid model improvements. You're stuck waiting for someone else to tell you it's safe to switch.

This is the most common failure mode I see in enterprise AI. Teams that can't evaluate their own systems can't iterate on them. Every model upgrade becomes a months-long validation exercise because there's no automated way to confirm that the new model maintains or improves output quality.

The teams that move fastest are the ones who can run a new model through their eval suite in hours, compare the results against their quality thresholds, and make a data-driven decision to adopt, reject, or selectively route. They treat model selection as a continuous optimisation problem, not a one-time architectural decision.
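The adopt, reject, or selectively route decision can be sketched as a comparison of per-task eval scores against thresholds. The `decide` function, the task names, and the scores are hypothetical. The rule used here (the candidate must clear the quality bar and at least match the current model) is one reasonable assumption, not a standard.

```python
def decide(baseline, candidate, thresholds):
    """Compare a candidate model's eval scores with the current model's.

    All three arguments map task name -> score in 0..1.
    Returns (decision, tasks the candidate should take over).
    """
    wins = [
        task for task, bar in thresholds.items()
        if candidate.get(task, 0.0) >= bar
        and candidate.get(task, 0.0) >= baseline.get(task, 0.0)
    ]
    if len(wins) == len(thresholds):
        return "adopt", wins
    if wins:
        return "route_selectively", wins
    return "reject", []


# Made-up scores for illustration only.
baseline = {"summarise": 0.90, "extract": 0.80}
candidate = {"summarise": 0.93, "extract": 0.70}
thresholds = {"summarise": 0.85, "extract": 0.75}

decision, tasks = decide(baseline, candidate, thresholds)
print(decision, tasks)
```

With these numbers the candidate takes over summarisation only, because it falls below the bar on extraction.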

We need to stop asking "which model is best?" and start asking "do we have the evals to prove it?"

Governance enables speed

There's a counterintuitive truth buried in all of this: governance isn't the thing that slows you down. It's the mechanism that lets you move fast.

When you have clear policies about data handling, model evaluation criteria, cost thresholds, and quality standards, switching models is a governed process with known checkpoints. Without those guardrails, every model change is an ad hoc risk assessment that requires executive sign-off and weeks of manual testing.

The organisations that will thrive in the commoditised super-intelligence era aren't the ones that pick the right model. They're the ones that build the infrastructure to continuously evaluate, route, and govern across multiple models, treating that infrastructure as a core product capability, not an operational overhead.

The model race is fun to watch. But the product builders who win won't be the ones cheering from the sidelines. They'll be the ones who built the system that doesn't care who's leading.

Frequently Asked Questions

Isn't multi-model orchestration over-engineering for most teams?

It depends on your scale and rate of change. If you're running a single AI feature with stable requirements, a single model might be fine, for now. But if you're building multiple AI features, operating in an environment where cost matters, or planning to iterate quickly, the routing abstraction pays for itself almost immediately. What matters isn't whether you need it today, but how painful migration will be when you need it tomorrow.

How do you build internal evals without a dedicated ML team?

Start with your existing quality criteria. What does "good output" look like for your specific use case? Write those criteria down as test cases. You don't need a sophisticated ML evaluation pipeline on day one. You need a structured set of inputs, expected outputs, and pass/fail criteria that you can run against any model. Frameworks like Langfuse, Braintrust, or even a well-organised spreadsheet can get you started. Sophistication comes later. The habit of evaluating comes first.
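A first eval suite really can be this small. The sketch below assumes a model is just a function from prompt to text, so the same cases can run against any provider. `EvalCase`, `run_suite`, `pass_rate`, and the stand-in `fake_model` are hypothetical names, and the checks are deliberately simple string tests.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    name: str
    prompt: str
    passes: Callable[[str], bool]   # pass/fail check applied to the model's output


def run_suite(model, cases):
    """Run every case against a model callable; return case name -> passed."""
    return {case.name: case.passes(model(case.prompt)) for case in cases}


def pass_rate(results):
    """Fraction of cases that passed."""
    return sum(results.values()) / len(results)


CASES = [
    EvalCase("mentions_refund", "Summarise: customer asks for a refund.",
             lambda out: "refund" in out.lower()),
    EvalCase("stays_short", "Summarise: customer asks for a refund.",
             lambda out: len(out.split()) <= 20),
]


def fake_model(prompt):
    """Stand-in for a real provider call, so the sketch runs offline."""
    return "The customer is requesting a refund."


results = run_suite(fake_model, CASES)
print(results, pass_rate(results))
```

Swapping `fake_model` for a thin wrapper around a real provider's client is the only change needed to evaluate a different model against the same cases.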

Should we wait for the model race to stabilise before investing in AI?

No. The model race isn't going to stabilise. It's going to accelerate. Waiting for a "winner" means waiting indefinitely. The right investment is in the infrastructure that makes you model-agnostic: routing, evals, and governance. Those investments appreciate in value as the model race intensifies, because they're what allow you to capture the improvements without the migration tax.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience running multi-model orchestration in production.