TL;DR

- Built a multi-model orchestration layer routing 50+ AI features across six LLMs (Claude Sonnet 4, Claude Haiku 3.5, GPT-4o Mini, Gemini 2.0 Flash, Gemini 2.5 Pro, Gemini 2.5 Flash) via OpenRouter

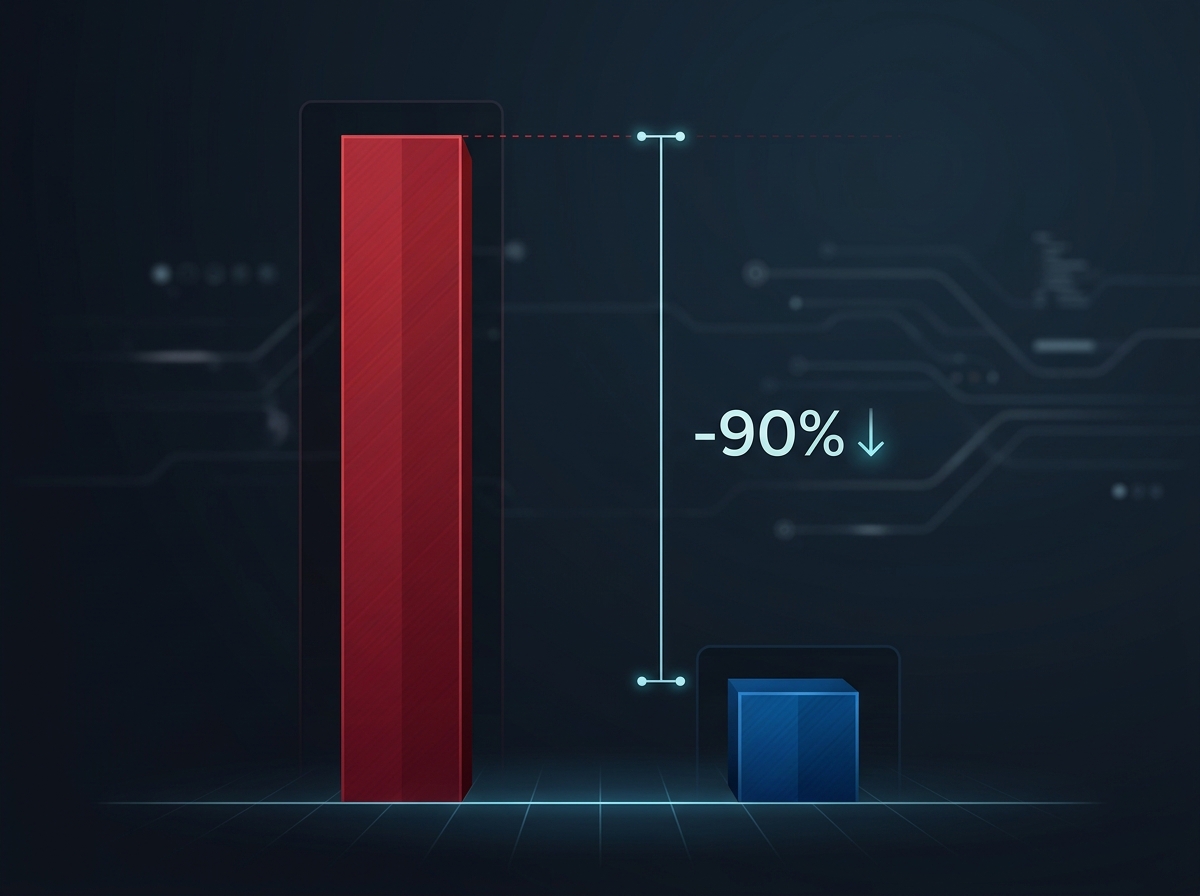

- Cut inference costs by 90% compared to a single-model approach through model routing and prompt caching

- Deployed across two production platforms (OpenChair and OpenTradie) with shared orchestration patterns, eval monitoring via Langfuse, and an AI voice receptionist running on Retell AI and Twilio

The Problem

OpenChair and OpenTradie each ship 50+ AI features. These include an AI voice receptionist handling real phone calls via Retell AI and Twilio, vision-based recommendations analysing venue photos, an AI growth coach providing business performance analysis, smart scheduling with conflict resolution, automated client communications and dozens of background intelligence features.

A single-model approach creates two failure modes. Use a premium model for everything, and inference costs make the product unprofitable at target price points. Use a budget model for everything, and quality on critical tasks drops below acceptable thresholds. As I argue in commoditised superintelligence and the model race, the rapid pace of model releases also makes single-model lock-in increasingly risky. The maths is straightforward: at 50+ features with varying usage frequencies, the gap between best-model-for-everything and right-model-for-each-task is the difference between a viable product and a margin trap. I break down the full pricing implications in the AI unit economics case study.

The Approach

The orchestration layer sits between the application and the LLM providers, following the generalised multi-model orchestration framework I've documented separately. Every AI task is classified along three dimensions: quality requirement (how much output quality matters for this specific use case), latency tolerance (whether the user needs a response in 500 ms or can wait 5 seconds), and cost sensitivity (the acceptable inference cost per request given expected usage volume). Together, these three dimensions determine which model handles each task.
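The three-dimension classification can be sketched as a small selection function. This is a minimal illustration, not the production routing logic: the `TaskProfile` shape, the tier names, the 1,000 ms cut-off and the rule order are all assumptions made for the example.

```typescript
// Hypothetical task profile covering the three dimensions described above.
type Level = "low" | "medium" | "high";

interface TaskProfile {
  quality: Level;         // how much output quality matters
  maxLatencyMs: number;   // how long the caller can wait for a response
  costSensitivity: Level; // how tightly cost per request is constrained
}

type ModelTier = "premium" | "fast" | "budget";

// Illustrative rule order: latency first, then quality, then cost.
// The 1000 ms threshold is an assumed value, not a measured one.
function selectTier(task: TaskProfile): ModelTier {
  if (task.maxLatencyMs <= 1000) return "fast";
  if (task.quality === "high") return "premium";
  if (task.costSensitivity === "high") return "budget";
  return "fast";
}

// Example profiles (hypothetical values).
const narrative: TaskProfile = { quality: "high", maxLatencyMs: 5000, costSensitivity: "low" };
const voiceTurn: TaskProfile = { quality: "medium", maxLatencyMs: 500, costSensitivity: "medium" };
const nightlyRollup: TaskProfile = { quality: "low", maxLatencyMs: 60000, costSensitivity: "high" };

const tiers = [selectTier(narrative), selectTier(voiceTurn), selectTier(nightlyRollup)];
```

Under these assumed rules, a latency-critical task goes to a fast model even when quality matters, which mirrors the trade-off the voice agent faces.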

OpenRouter provides the routing infrastructure with a unified API across all model providers, built-in failover and prompt caching. Langfuse provides eval monitoring, cost tracking per feature and quality scoring. The Vercel AI SDK handles streaming responses and tool calling at the application layer.
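As a rough sketch of what the unified API with failover looks like from the application side, the function below builds a request body using OpenRouter's `models` array, which is tried in order so that later entries act as fallbacks. The model slugs and the Sonnet-to-Gemini fallback pairing are assumptions for illustration and should be checked against OpenRouter's current model list; the function name and types are hypothetical.

```typescript
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface RoutedRequest {
  models: string[];        // tried in order; later entries act as fallbacks
  messages: ChatMessage[];
}

// Assumed slugs; verify against OpenRouter's model list before use.
const PRIMARY_MODEL = "anthropic/claude-sonnet-4";
const FALLBACK_MODELS = ["google/gemini-2.5-pro"];

// Builds the JSON body for a chat completion request with fallbacks.
function buildRoutedRequest(
  systemPrompt: string,
  userInput: string,
  primary: string = PRIMARY_MODEL,
  fallbacks: string[] = FALLBACK_MODELS
): RoutedRequest {
  return {
    models: [primary].concat(fallbacks),
    messages: [
      { role: "system", content: systemPrompt },
      { role: "user", content: userInput },
    ],
  };
}

const exampleBody = buildRoutedRequest(
  "You are a business growth coach.",
  "Summarise last month's bookings."
);
```

The resulting body would be sent as JSON to OpenRouter's chat completions endpoint with a bearer API key; the transport is omitted here to keep the sketch self-contained.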

Key Decisions

1. Model Assignment by Task Profile, Not Feature Category

The routing logic doesn't assign models by feature ("use Claude for the growth coach, use GPT for scheduling"). It assigns models by task profile within each feature. The AI growth coach uses Claude Sonnet for generating business analysis narratives (quality-critical, user-facing). It uses Claude Haiku for extracting metrics from booking data (high-volume extraction where speed matters more than prose quality). It uses GPT-4o Mini for routine data aggregation that runs in the background.
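The growth coach assignments described above can be written down as a per-subtask routing table. The subtask identifiers and the `modelFor` helper are hypothetical names for illustration; the model assignments are the ones stated in the text.

```typescript
// Hypothetical subtask identifiers for the AI growth coach.
type GrowthCoachSubtask = "analysis_narrative" | "metric_extraction" | "data_aggregation";

// One feature, three task profiles, three models.
const growthCoachRoutes: Record<GrowthCoachSubtask, string> = {
  analysis_narrative: "Claude Sonnet", // quality-critical, user-facing
  metric_extraction: "Claude Haiku",   // high-volume extraction, speed matters
  data_aggregation: "GPT-4o Mini",     // routine background work
};

function modelFor(subtask: GrowthCoachSubtask): string {
  return growthCoachRoutes[subtask];
}

const narrativeModel = modelFor("analysis_narrative");
```

Keying the table by subtask rather than by feature is the design point: adding a new subtask means adding one row, not rethinking the feature's model.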

A single feature might use three different models depending on which subtask is executing. This granularity is where the cost savings come from. Most of the inference volume in any AI feature is background processing, classification and extraction, not user-facing generation. Routing the high-volume background work to cost-effective models while reserving premium models for the moments that matter is what makes the economics work.
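To make the volume argument concrete, here is a small blended-cost calculation. The traffic split and relative costs are made-up numbers chosen for illustration; they are not measurements from OpenChair or OpenTradie and are not the source of the 90% figure.

```typescript
interface TrafficSlice {
  label: string;
  shareOfRequests: number; // fraction of total volume; slices should sum to 1
  relativeCost: number;    // cost per request relative to the premium model (premium = 1)
}

// Weighted average cost per request, relative to sending everything to the premium model.
function blendedRelativeCost(slices: TrafficSlice[]): number {
  return slices.reduce((sum, s) => sum + s.shareOfRequests * s.relativeCost, 0);
}

// Hypothetical mix: most volume is background work on a much cheaper model.
const illustrativeMix: TrafficSlice[] = [
  { label: "user-facing generation", shareOfRequests: 0.1, relativeCost: 1 },
  { label: "background extraction and classification", shareOfRequests: 0.9, relativeCost: 0.05 },
];

const routedCost = blendedRelativeCost(illustrativeMix); // about 0.145
const saving = 1 - routedCost;                           // about 0.855
```

With these assumed inputs, routing alone removes roughly 85% of the premium-only cost, and the result is dominated by how cheap the high-volume slice is rather than by the premium slice.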

2. Prompt Caching as a Cost Architecture

OpenRouter's prompt caching eliminates redundant processing of system prompts and shared context. In a multi-tenant SaaS platform, system prompts and base context are largely identical across requests for the same feature. Without caching, every request pays the full input token cost for context that hasn't changed. With caching, repeated context is billed at a discounted cache-read rate, a fraction of the standard input price that varies by provider.

The impact is substantial. System prompts for complex features like the AI growth coach or voice receptionist can exceed 2,000 tokens. At hundreds of requests per day across all tenants, caching these tokens alone accounts for a significant portion of the 90% cost reduction. This isn't optimisation at the margins. It's an architectural decision that determines whether the product is financially viable.
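A back-of-the-envelope version of this calculation is sketched below. The price per million tokens, the request count and the cache-read multiplier are hypothetical inputs, and the model is deliberately simplified: it ignores cache-write surcharges, cache expiry and cache misses.

```typescript
interface CachingScenario {
  systemPromptTokens: number;
  requestsPerDay: number;
  pricePerMillionInputTokens: number; // USD, hypothetical
  cacheReadMultiplier: number;        // fraction of the normal input price paid on a cache hit
}

// Daily cost of the system prompt tokens only, with or without caching.
// Simplification: assumes every request is a cache hit when caching is on.
function dailySystemPromptCost(s: CachingScenario, cached: boolean): number {
  const tokensPerDay = s.systemPromptTokens * s.requestsPerDay;
  const fullCost = (tokensPerDay / 1e6) * s.pricePerMillionInputTokens;
  return cached ? fullCost * s.cacheReadMultiplier : fullCost;
}

// Hypothetical scenario: a 2,000-token system prompt at 500 requests per day.
const scenario: CachingScenario = {
  systemPromptTokens: 2000,
  requestsPerDay: 500,
  pricePerMillionInputTokens: 3,
  cacheReadMultiplier: 0.1,
};

const withoutCache = dailySystemPromptCost(scenario, false); // about 3 USD per day
const withCache = dailySystemPromptCost(scenario, true);     // about 0.3 USD per day
```

The absolute numbers are small for one feature, but the same ratio applies to every feature with a large shared prompt, which is why the effect compounds across 50+ features.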

3. The Voice Agent as a Distinct Orchestration Challenge

The AI voice receptionist (Retell AI + Twilio) operates under constraints that text-based features don't face. Latency tolerance is measured in hundreds of milliseconds, not seconds. A perceptible pause in a phone conversation signals to the caller that they're talking to a machine.

This constraint drove the voice agent to use models optimised for speed (Gemini 2.0 Flash for real-time conversation, with fallback to Claude Haiku for specific reasoning tasks). The voice pipeline has its own orchestration logic: speech-to-text, intent classification, response generation and text-to-speech all need to complete within a turn-taking window that feels natural to a human caller.
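The turn-taking constraint amounts to a latency budget across the four pipeline stages. The sketch below checks whether a set of stage latencies fits a turn window; the 800 ms window and every per-stage figure are assumed values for illustration, not measurements from the production voice agent.

```typescript
interface StageLatency {
  stage: string;
  ms: number; // assumed latency for this stage
}

const TURN_WINDOW_MS = 800; // hypothetical budget for one conversational turn

function totalLatencyMs(stages: StageLatency[]): number {
  return stages.reduce((sum, s) => sum + s.ms, 0);
}

function fitsTurnWindow(stages: StageLatency[], windowMs: number = TURN_WINDOW_MS): boolean {
  return totalLatencyMs(stages) <= windowMs;
}

// Pipeline with a speed-optimised model in the generation stage (made-up numbers).
const fastPath: StageLatency[] = [
  { stage: "speech-to-text", ms: 150 },
  { stage: "intent classification", ms: 50 },
  { stage: "response generation", ms: 350 },
  { stage: "text-to-speech", ms: 200 },
];

// Same pipeline with a slower model swapped into the generation stage.
const slowPath: StageLatency[] = fastPath.map((s) =>
  s.stage === "response generation" ? { stage: s.stage, ms: 900 } : s
);

const fastFits = fitsTurnWindow(fastPath); // 750 ms total, within the window
const slowFits = fitsTurnWindow(slowPath); // 1300 ms total, over the window
```

Because the stages run in sequence, response generation is usually the only stage with enough slack to trade, which is why model choice dominates the voice pipeline's design.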

Conversation flow design matters as much as model selection. The voice agent follows defined scripts for common scenarios (booking confirmations, cancellations, availability inquiries) with graceful escalation to human handoff when conversations move outside the agent's capability boundary. These patterns draw from the agentic AI patterns framework, particularly the principles around capability boundaries and graceful degradation.
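A minimal sketch of the scripted-versus-handoff decision follows. The intent names mirror the scenarios listed above, while the `unknown` intent, the confidence score and the 0.7 threshold are assumptions introduced for the example.

```typescript
type ScriptedIntent = "booking_confirmation" | "cancellation" | "availability_inquiry";
type Intent = ScriptedIntent | "unknown";

type Action =
  | { kind: "run_script"; script: ScriptedIntent }
  | { kind: "handoff_to_human"; reason: string };

// Escalate when the intent is outside the scripted set or the classifier is unsure.
// The default threshold of 0.7 is a hypothetical value.
function nextAction(intent: Intent, confidence: number, minConfidence: number = 0.7): Action {
  if (intent === "unknown") {
    return { kind: "handoff_to_human", reason: "intent outside scripted scenarios" };
  }
  if (confidence < minConfidence) {
    return { kind: "handoff_to_human", reason: "low classification confidence" };
  }
  return { kind: "run_script", script: intent };
}

const confidentCancel = nextAction("cancellation", 0.92);
const unsureBooking = nextAction("booking_confirmation", 0.4);
const offScript = nextAction("unknown", 0.99);
```

Making the handoff an explicit return value, rather than an error path, keeps the capability boundary visible in the conversation flow itself.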

4. Shared Patterns, Domain-Specific Customisation

OpenChair and OpenTradie share the same orchestration infrastructure, the same model routing logic and the same monitoring stack. What differs is the domain layer: OpenChair handles appointment-based scheduling for beauty and wellness venues; OpenTradie handles job-based scheduling with crew dispatch, travel time and equipment tracking for trade businesses.

This separation was deliberate. The orchestration layer is a solved problem that shouldn't be re-solved for each vertical. The domain layer is where the product differentiation lives. Shipping a new vertical means writing domain logic, not rebuilding AI infrastructure. OpenChair was the first vertical to prove this pattern, and OpenTradie validated that the shared infrastructure transferred cleanly to a different domain. The economics of multi-agent systems in specialised verticals is something I explore further in audit, tax and multi-agent economics.
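The split between shared orchestration and domain logic can be illustrated with a small adapter interface. The `DomainAdapter` shape and the prompt wording are hypothetical; only the domain differences (appointments versus jobs with crew dispatch, travel time and equipment tracking) come from the description above.

```typescript
// Domain layer: everything that differs between verticals lives here.
interface DomainAdapter {
  vertical: string;
  schedulingUnit: "appointment" | "job";
  extraContext: string[];
}

const openChair: DomainAdapter = {
  vertical: "OpenChair",
  schedulingUnit: "appointment",
  extraContext: [],
};

const openTradie: DomainAdapter = {
  vertical: "OpenTradie",
  schedulingUnit: "job",
  extraContext: ["crew dispatch", "travel time", "equipment tracking"],
};

// Shared layer: identical code for every vertical, parameterised by the adapter.
function buildSchedulingPrompt(domain: DomainAdapter): string {
  const extras =
    domain.extraContext.length > 0
      ? " Also account for: " + domain.extraContext.join(", ") + "."
      : "";
  return "You schedule " + domain.schedulingUnit + "s for " + domain.vertical + "." + extras;
}

const chairPrompt = buildSchedulingPrompt(openChair);
const tradiePrompt = buildSchedulingPrompt(openTradie);
```

In this arrangement a third vertical would be a new `DomainAdapter` value, with no change to the shared function.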

5. Eval Monitoring as Continuous Quality Assurance

Langfuse tracks every AI interaction: model used, token count, latency, cost and quality scores. This data serves three purposes. Cost monitoring: if a feature's inference costs trend upward (due to prompt drift, increased usage or model pricing changes), the dashboard surfaces it before it impacts margins. Quality tracking: output quality scores flag degradation that might not be visible in aggregate metrics. Model comparison: when a new model version releases, Langfuse data provides the baseline for A/B comparison on real production tasks.
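The cost-monitoring use case can be sketched without any Langfuse-specific calls. The record shape below mirrors the fields listed above, and the drift check compares mean cost per feature between two periods; the field names, the 20% threshold and the sample data are assumptions, and this is plain TypeScript rather than the Langfuse API.

```typescript
// Hypothetical per-interaction record with the fields named above.
interface Interaction {
  feature: string;
  model: string;
  tokens: number;
  latencyMs: number;
  costUsd: number;
  qualityScore: number;
}

// Mean cost per interaction, grouped by feature.
function meanCostByFeature(records: Interaction[]): Record<string, number> {
  const totals: Record<string, { sum: number; count: number }> = {};
  for (const r of records) {
    const t = totals[r.feature] ?? { sum: 0, count: 0 };
    totals[r.feature] = { sum: t.sum + r.costUsd, count: t.count + 1 };
  }
  const means: Record<string, number> = {};
  for (const feature of Object.keys(totals)) {
    const t = totals[feature];
    if (t) means[feature] = t.sum / t.count;
  }
  return means;
}

// Features whose mean cost rose by more than `threshold` (default 20%, an assumed value).
function costDriftAlerts(
  baseline: Record<string, number>,
  current: Record<string, number>,
  threshold: number = 0.2
): string[] {
  const alerts: string[] = [];
  for (const feature of Object.keys(current)) {
    const before = baseline[feature];
    const now = current[feature];
    if (before === undefined || now === undefined || before <= 0) continue;
    if ((now - before) / before > threshold) alerts.push(feature);
  }
  return alerts;
}

// Made-up sample data for two periods.
const lastMonth: Interaction[] = [
  { feature: "growth_coach", model: "Claude Sonnet", tokens: 3000, latencyMs: 2400, costUsd: 0.01, qualityScore: 0.9 },
  { feature: "scheduling", model: "GPT-4o Mini", tokens: 800, latencyMs: 600, costUsd: 0.002, qualityScore: 0.85 },
];
const thisMonth: Interaction[] = [
  { feature: "growth_coach", model: "Claude Sonnet", tokens: 4500, latencyMs: 2600, costUsd: 0.015, qualityScore: 0.9 },
  { feature: "scheduling", model: "GPT-4o Mini", tokens: 800, latencyMs: 620, costUsd: 0.002, qualityScore: 0.86 },
];

const driftAlerts = costDriftAlerts(meanCostByFeature(lastMonth), meanCostByFeature(thisMonth));
```

Here only the hypothetical `growth_coach` feature is flagged, because its mean cost rose by about 50% while `scheduling` stayed flat.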

Without this monitoring layer, multi-model orchestration is flying blind. Model pricing changes, prompt evolution and usage pattern shifts mean the optimal routing configuration drifts over time. What was the right model assignment six months ago might not be today. I detail the broader principles behind this in the evaluation frameworks handbook chapter, which covers how to build eval systems that keep pace with model and product evolution.

Results

- 50+ AI features powered across two production platforms

- Six LLMs orchestrated via OpenRouter (Claude Sonnet 4, Claude Haiku 3.5, GPT-4o Mini, Gemini 2.0 Flash, Gemini 2.5 Pro, Gemini 2.5 Flash)

- 90% inference cost reduction compared to single-model approach

- AI voice receptionist handling production phone calls with sub-second response times

- Shared orchestration infrastructure enabling rapid vertical expansion

- Cost per AI action tracked and monitored in real time via Langfuse

- Zero margin-negative AI features in production

Tech Stack

OpenRouter (multi-model routing, prompt caching), Vercel AI SDK (streaming, tool calling), Langfuse (eval monitoring, cost tracking, quality scoring), OpenTelemetry (observability), Retell AI (voice agent platform), Twilio (telephony), Claude Sonnet 4, Claude Haiku 3.5, GPT-4o Mini, Gemini 2.0 Flash, Gemini 2.5 Pro, Gemini 2.5 Flash, Next.js 16, tRPC 11, Supabase (PostgreSQL)