How to Measure an AI Product (When Traditional Metrics Lie)

TL;DR

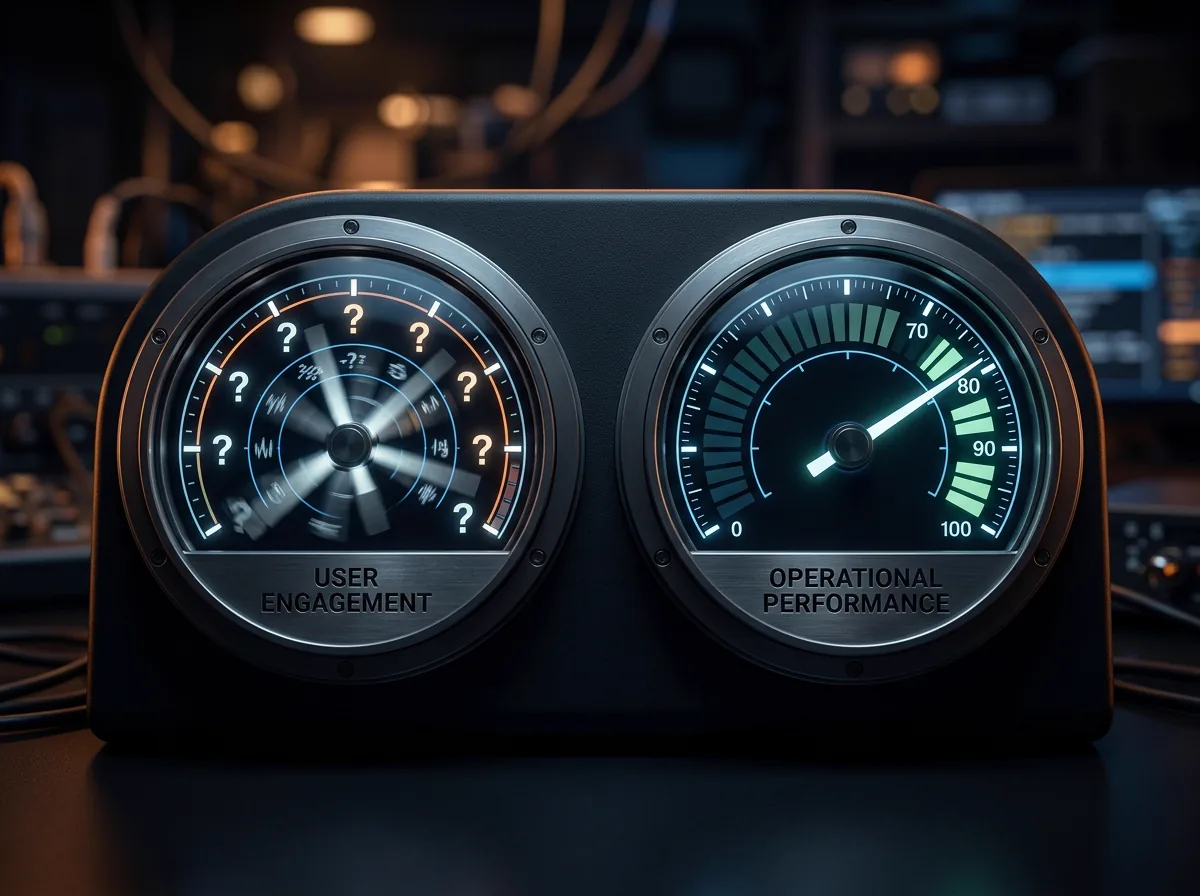

- Traditional product metrics (DAU, time-in-app, NPS) actively mislead when applied to AI products, because the best AI products reduce the need for human interaction

- AI products need a metrics stack built around task outcomes, automation rates, error costs, and confidence distributions, not engagement proxies

- The framework: measure what the AI accomplishes (output metrics), how reliably it accomplishes it (quality metrics), and what it costs to accomplish it (economics metrics)

Your best AI feature makes your engagement metrics look terrible.

A user who spent 45 minutes manually writing property descriptions now spends 3 minutes reviewing AI-generated ones. Time-in-app dropped 93%. By traditional product metrics, that user is disengaging. By any sane measure, they're getting dramatically more value from your product.

This is the measurement paradox of AI products. The metrics we've relied on for two decades of SaaS (daily active users, session duration, feature engagement, Net Promoter Score) were designed for products where humans do the work. They measure how much time humans spend in the product, how often they come back, and how they feel about the experience.

AI products are supposed to reduce human effort. The better they work, the less humans need to interact with them. Measuring an AI product by engagement metrics is like measuring a dishwasher's quality by how long you stand at the sink.

Why do traditional product metrics mislead for AI?

DAU/MAU goes down when AI works. If your AI agent resolves support tickets autonomously, your support team logs in less often. DAU drops. But the tickets are getting resolved faster and more consistently. The product is working better. The metric says it's working worse.

Time-in-app drops when AI saves time. The entire value proposition of most AI features is "spend less time on this task." If you're measuring time-in-app and the number goes down after an AI feature launch, is that success or failure? It's success. Your metric says failure.

NPS misreads AI sentiment. Users rate AI features based on their worst experience, not their average experience. One hallucinated output in 50 interactions can crater the NPS for an AI feature that's 98% accurate. NPS captures sentiment about memorable moments, not systematic performance.

Feature engagement conflates trial with value. "30% of users tried the AI feature" is adoption, not value. Of that 30%, how many got a useful output? How many used it again? How many reverted to the manual workflow? Engagement metrics don't distinguish between "tried it once out of curiosity" and "relies on it daily."

None of these metrics are wrong in isolation. They're measuring real things. They're just measuring the wrong things for an AI product, and optimising for them will lead you to make your AI features worse (more intrusive, more notification-heavy, more engagement-seeking) rather than better (more autonomous, more accurate, more invisible).

The AI product metrics framework

AI products need three layers of measurement: output metrics (what the AI accomplishes), quality metrics (how reliably it accomplishes it), and economics metrics (what it costs).

Layer 1: Output metrics

These measure the work the AI actually does.

Task completion rate. Of the tasks the AI attempted, what percentage did it complete successfully without human intervention? This is the single most important metric for any AI feature. A support agent that resolves 72% of tickets autonomously is delivering measurable value. One that resolves 15% is a glorified search bar.

Automation rate. What percentage of eligible tasks are being handled by the AI rather than by humans? If your AI feature is capable of handling 80% of a workflow but only 20% of tasks are routed to it, you have an adoption problem, not a capability problem. The adoption playbook applies.

Throughput. How many tasks does the AI process per unit of time? For a document processing agent: documents per hour. For a customer support agent: tickets per hour. For a data extraction agent: records per minute. Throughput lets you calculate the AI's equivalent human capacity and anchor your value proposition in concrete terms.

Escalation rate. What percentage of tasks does the AI route to a human because it can't handle them? This is roughly the complement of task completion rate (not exactly, because some tasks fail without ever being escalated), and tracking it separately matters because escalation patterns reveal systematic gaps. If 40% of escalations involve the same type of query, that's your next improvement target.
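
As a rough sketch, the three rate metrics above can be computed from four counters. The `TaskLog` fields and the sample numbers are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass


@dataclass
class TaskLog:
    """Hypothetical counters for one reporting period."""
    eligible: int   # tasks the AI could in principle handle
    attempted: int  # tasks actually routed to the AI
    completed: int  # finished with no human intervention
    escalated: int  # handed to a human by the AI


def output_metrics(log: TaskLog) -> dict[str, float]:
    """Return completion, automation, and escalation rates as fractions."""
    if log.eligible == 0 or log.attempted == 0:
        raise ValueError("need at least one eligible and one attempted task")
    return {
        "task_completion_rate": log.completed / log.attempted,
        "automation_rate": log.attempted / log.eligible,
        "escalation_rate": log.escalated / log.attempted,
    }


# Illustrative numbers: the AI completes most of what it sees,
# but only a fifth of eligible work is routed to it.
log = TaskLog(eligible=1000, attempted=200, completed=144, escalated=50)
metrics = output_metrics(log)
```

In this made-up example, completed and escalated tasks don't add up to attempted tasks. The remainder are tasks that failed without being escalated, which is one reason to track the two rates separately.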

Layer 2: Quality metrics

These measure whether the AI's output is actually good.

Accuracy by task type. Not a single accuracy number. Break it down by the categories of work the AI handles. Your AI might be 95% accurate on data extraction but 70% accurate on free-text generation. A single blended accuracy number hides the 70% behind the 95% and prevents you from making targeted improvements.
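
A minimal sketch of the breakdown, assuming each evaluated output is labelled with a task type and a correct/incorrect flag (both names are illustrative):

```python
from collections import defaultdict


def accuracy_by_task_type(results):
    """results: iterable of (task_type, is_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for task_type, is_correct in results:
        totals[task_type] += 1
        correct[task_type] += int(is_correct)
    return {t: correct[t] / totals[t] for t in totals}


# Made-up evaluation set: lots of extraction, a little generation.
results = (
    [("extraction", True)] * 95 + [("extraction", False)] * 5
    + [("generation", True)] * 14 + [("generation", False)] * 6
)
per_type = accuracy_by_task_type(results)
blended = sum(ok for _, ok in results) / len(results)
```

Here the blended figure comes out near 91%, which looks fine on a dashboard while generation sits at 70%.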

Confidence distribution. Plot the distribution of confidence scores across all AI outputs. A healthy distribution has a strong peak at high confidence (the AI knows what it's doing most of the time) with a thin tail at low confidence (uncertainty is rare). Shape alone isn't enough: the scores also need to be calibrated, meaning outputs scored at 90% confidence are right about 90% of the time. A flat distribution, or one where confidence doesn't track correctness, means the AI doesn't really know when it's right or wrong, which makes any review process that spot-checks low-confidence outputs unreliable.
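
One way to look at this without a plotting library is to bucket outputs by confidence and compare each bucket's size with its observed accuracy. This is a sketch under the assumption that your system emits a confidence score between 0 and 1 per output; the function name and bin count are arbitrary.

```python
def calibration_table(samples, n_bins=5):
    """samples: list of (confidence in [0, 1], was_correct) pairs.

    Returns one row per bin: (low, high, count, observed_accuracy).
    observed_accuracy is None for empty bins.
    """
    bins = [[0, 0] for _ in range(n_bins)]  # [count, correct] per bin
    for conf, ok in samples:
        i = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the top bin
        bins[i][0] += 1
        bins[i][1] += int(ok)
    rows = []
    for i, (count, correct) in enumerate(bins):
        accuracy = correct / count if count else None
        rows.append((i / n_bins, (i + 1) / n_bins, count, accuracy))
    return rows


# Made-up data: a strong high-confidence peak and a thin low-confidence tail.
samples = (
    [(0.95, True)] * 90 + [(0.95, False)] * 5
    + [(0.30, True)] * 2 + [(0.30, False)] * 3
)
rows = calibration_table(samples)
```

The count column gives the shape of the distribution; the accuracy column shows whether high confidence actually corresponds to being right.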

Override rate. When humans review AI output, how often do they change it? An override rate between 10% and 30% is typically healthy: users are engaged and making targeted corrections. Below 5% in high-stakes domains suggests users aren't checking (rubber-stamping). Above 50% suggests the AI output isn't good enough to be useful as a starting point.
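
The bands above translate into a simple classifier. This is a sketch using the thresholds from the paragraph; the label strings are illustrative, and the gaps between bands (5% to 10%, 30% to 50%) are left explicitly unclassified because the rule of thumb doesn't cover them.

```python
def classify_override_rate(overridden: int, reviewed: int) -> tuple[float, str]:
    """Return (override_rate, label) using the rule-of-thumb bands."""
    if reviewed == 0:
        raise ValueError("no reviewed outputs")
    rate = overridden / reviewed
    if rate < 0.05:
        label = "possible rubber-stamping"  # most concerning in high-stakes domains
    elif 0.10 <= rate <= 0.30:
        label = "healthy"
    elif rate > 0.50:
        label = "output not useful as a starting point"
    else:
        label = "between bands; investigate"
    return rate, label


rate, label = classify_override_rate(overridden=20, reviewed=100)
```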

Error severity distribution. Not all errors are equal. An AI that occasionally gets the date format wrong is different from one that occasionally invents a number. Classify errors by severity (cosmetic, functional, critical) and track each category independently. Zero tolerance for critical errors. Tolerance for cosmetic errors. This tiered approach prevents over-investment in low-severity corrections while ensuring high-severity errors get immediate attention.
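
A sketch of tiered tracking, assuming errors are already labelled with one of the three severities. The per-1,000 budgets are hypothetical placeholders, apart from the zero budget for critical errors, which mirrors the zero-tolerance rule above.

```python
from collections import Counter
from enum import Enum


class Severity(Enum):
    COSMETIC = "cosmetic"
    FUNCTIONAL = "functional"
    CRITICAL = "critical"


# Hypothetical budgets: maximum tolerated errors per 1,000 outputs.
BUDGET_PER_1000 = {
    Severity.COSMETIC: 50,
    Severity.FUNCTIONAL: 10,
    Severity.CRITICAL: 0,  # zero tolerance
}


def severity_breaches(errors, total_outputs):
    """Return {severity: errors per 1,000 outputs} for each budget exceeded."""
    counts = Counter(errors)
    breaches = {}
    for severity, budget in BUDGET_PER_1000.items():
        per_1000 = counts[severity] * 1000 / total_outputs
        if per_1000 > budget:
            breaches[severity] = per_1000
    return breaches


# Made-up period: 2,000 outputs with 35 labelled errors.
errors = (
    [Severity.COSMETIC] * 30 + [Severity.FUNCTIONAL] * 4 + [Severity.CRITICAL] * 1
)
breaches = severity_breaches(errors, total_outputs=2000)
```

In this example only the single critical error trips an alert, even though cosmetic errors are thirty times more common.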

Regression detection. AI systems can degrade silently. A model update, a data drift, a prompt change can reduce quality without any obvious error. Run your eval suite continuously against production outputs. Track quality metrics over time. Set alerts for degradation beyond your threshold. Without regression detection, you're flying blind.
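
A minimal version of such an alert compares a recent window of eval scores against the window before it. The window sizes and the 3-point threshold here are assumptions to tune, not recommendations, and a production setup would likely want something more robust to noise.

```python
from statistics import mean


def detect_regression(scores, baseline_n=7, recent_n=3, max_drop=0.03):
    """scores: chronological quality scores (e.g. daily eval accuracy).

    Returns (alert, drop), where drop is the baseline mean minus the recent mean.
    """
    if len(scores) < baseline_n + recent_n:
        raise ValueError("not enough history to compare windows")
    baseline = mean(scores[-(baseline_n + recent_n):-recent_n])
    recent = mean(scores[-recent_n:])
    drop = baseline - recent
    return drop > max_drop, drop


# Made-up daily accuracy: stable for a week, then a silent drop.
daily_accuracy = [0.94, 0.95, 0.94, 0.95, 0.93, 0.94, 0.95, 0.90, 0.89, 0.90]
alert, drop = detect_regression(daily_accuracy)
```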

Layer 3: Economics metrics

These measure whether the AI is worth running.

Cost per task. Total inference cost (including any manager-worker audit cost) divided by tasks completed. This is your unit economics foundation. If cost per task exceeds what you can charge or what a human costs, the feature is margin-negative regardless of how well it works.

Cost per quality level. What does it cost to achieve 90% accuracy vs 95% vs 99%? The cost curve is typically steeply nonlinear: each additional point of accuracy costs more than the last. Going from 90% to 95% might cost 2x more (higher audit rates, better models). Going from 95% to 99% might cost 5x more. Understanding this curve lets you offer tiered pricing based on reliability.

Human-equivalent cost ratio. What does the AI cost compared to a human doing the same task? If a human processes 40 invoices per hour at a fully loaded cost of $40/hour, the human cost is $1 per invoice. If the AI processes the same invoice at $0.05, the cost ratio is 20:1 in favour of the AI. This ratio is the foundation of your value proposition and your pricing headroom.
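
The invoice arithmetic can be written out as a few small functions. The function names and the $50 total inference bill are illustrative; the per-invoice figures are the ones from the example above.

```python
def cost_per_task(total_cost: float, tasks_completed: int) -> float:
    """Total inference (and audit) cost divided by tasks completed."""
    return total_cost / tasks_completed


def human_cost_per_task(hourly_cost: float, tasks_per_hour: float) -> float:
    """Fully loaded hourly cost spread over hourly throughput."""
    return hourly_cost / tasks_per_hour


def human_equivalent_ratio(human_cost: float, ai_cost: float) -> float:
    """How many times cheaper the AI is per task."""
    return human_cost / ai_cost


human = human_cost_per_task(hourly_cost=40.0, tasks_per_hour=40)  # $1.00 per invoice
ai = cost_per_task(total_cost=50.0, tasks_completed=1000)         # $0.05 per invoice
ratio = human_equivalent_ratio(human, ai)                         # about 20, i.e. 20:1
```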

Marginal cost trend. Is your per-task cost going up or down over time? With prompt caching, model improvements, and infrastructure optimisation, it should trend down. If it's trending up (more complex queries, higher audit rates, model price increases), you have a scaling problem to address before it hits your margin.
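
One simple way to put a number on the trend is the least-squares slope of per-task cost over equally spaced periods. This is a sketch; the monthly figures are invented.

```python
def cost_trend_slope(costs):
    """Least-squares slope of cost per task across equally spaced periods.

    Negative means per-task cost is falling; positive means it is rising.
    """
    n = len(costs)
    if n < 2:
        raise ValueError("need at least two periods")
    x_mean = (n - 1) / 2
    y_mean = sum(costs) / n
    numerator = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(costs))
    denominator = sum((x - x_mean) ** 2 for x in range(n))
    return numerator / denominator


# Made-up monthly cost per task in dollars.
monthly_cost = [0.060, 0.056, 0.055, 0.051, 0.048]
slope = cost_trend_slope(monthly_cost)  # negative: cost is trending down
```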

Putting it together: the AI product dashboard

A useful AI product dashboard has three sections:

Section 1: Is the AI working? Task completion rate, automation rate, throughput. These tell you whether the AI is doing its job. If these numbers are flat or declining, the AI feature needs engineering attention.

Section 2: Is the AI good? Accuracy by task type, confidence distribution, override rate, error severity, regression alerts. These tell you whether the AI's output is trustworthy. If these numbers are degrading, the AI needs eval attention.

Section 3: Is the AI profitable? Cost per task, cost per quality level, human-equivalent cost ratio, marginal cost trend. These tell you whether the AI is commercially viable. If these numbers are unfavourable, the feature needs architectural or pricing attention.

Traditional product dashboards mix engagement and satisfaction metrics. AI product dashboards separate capability, quality, and economics. Each section has different owners (engineering, data science, product/finance) and different cadences (daily for capability, weekly for quality, monthly for economics).

The meta-metric: replacement rate

There's one metric that sits above the framework: replacement rate. Of all the work your users do, what percentage has been replaced by AI?

Replacement rate is the ultimate measure of an AI product's value. A product with 50+ AI features but a 5% replacement rate has built a lot of AI and delivered little value. A product with 3 AI features and a 40% replacement rate has transformed how its users work.
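
How you quantify "work" is a judgment call. One possible sketch, assuming you can estimate baseline human hours per workflow and how many of those hours the AI now handles:

```python
def replacement_rate(workflows):
    """workflows: list of (baseline_human_hours, hours_now_done_by_ai) pairs."""
    total_hours = sum(baseline for baseline, _ in workflows)
    replaced_hours = sum(replaced for _, replaced in workflows)
    if total_hours == 0:
        raise ValueError("no baseline work recorded")
    return replaced_hours / total_hours


# Made-up month: three workflows, only two touched by AI.
workflows = [(100, 60), (50, 0), (50, 20)]
rate = replacement_rate(workflows)  # 80 of 200 hours replaced
```

Weighting by hours rather than by feature count is what separates the two products in the comparison above.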

This is the metric your board should care about. Not "how many AI features did we ship" (vanity). Not "what's our AI feature adoption rate" (incomplete). But "how much of the work that humans used to do is now done by AI, reliably, at lower cost?"

If you can't answer that question with a number, you don't know whether your AI investment is paying off.

Frequently Asked Questions

How do you establish baselines for AI metrics when you don't have historical data?

Start measuring before the AI feature launches. Track the human-driven baseline: how many tasks per hour, what error rates, what cost per task. Then launch the AI and measure the same metrics. The delta between human baseline and AI performance is your ROI story. Without the baseline, you can't prove the AI feature created value.

What sample size do you need for reliable AI quality metrics?

For accuracy measurements, 200 to 500 evaluated outputs are enough for most use cases: when accuracy is around 90%, that gives a margin of error of roughly ±3 to ±4 percentage points at 95% confidence. For confidence calibration, you need more (1,000+) because you're measuring the relationship between predicted confidence and actual accuracy across the distribution. For regression detection, continuous sampling at 5% to 10% of production volume is a reasonable starting point. Scale up if you detect anomalies.
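
To see what a given sample size buys you, a normal-approximation margin of error is a quick check. This sketch assumes outputs are sampled independently and that accuracy isn't too close to 0% or 100%, where the approximation breaks down.

```python
import math


def margin_of_error(accuracy: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for an accuracy measured on n samples."""
    return z * math.sqrt(accuracy * (1 - accuracy) / n)


moe_200 = margin_of_error(0.90, 200)  # roughly 0.042
moe_500 = margin_of_error(0.90, 500)  # roughly 0.026
```

The margin shrinks with the square root of the sample size, so halving it takes about four times as many evaluated outputs.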

How do you handle metrics for AI features where the output quality is subjective?

For subjective outputs (generated text, creative content, recommendations), use human evaluation on a rubric. Define 3 to 5 quality dimensions (accuracy, relevance, tone, completeness) and score each on a 1 to 5 scale. Average across evaluators. Track the distribution, not just the mean. A mean score of 3.5 could mean "consistently adequate" or "half brilliant, half terrible." The distribution tells you which.
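
A small illustration of why the mean alone misleads, using two invented sets of rubric scores:

```python
from collections import Counter
from statistics import mean, pstdev

# Made-up rubric scores on a 1 to 5 scale.
consistent = [3, 4, 3, 4, 3, 4, 3, 4]  # "consistently adequate"
polarised = [5, 5, 5, 5, 2, 2, 2, 2]   # "half brilliant, half terrible"


def summarise(scores):
    """Return the mean, the population standard deviation, and a histogram."""
    return {
        "mean": mean(scores),
        "spread": pstdev(scores),
        "histogram": dict(sorted(Counter(scores).items())),
    }


summary_consistent = summarise(consistent)
summary_polarised = summarise(polarised)
```

Both sets average 3.5, but the spread is three times larger in the second, and the histogram shows nobody actually received a 3 or a 4.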

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience with evaluation frameworks at OpenChair.