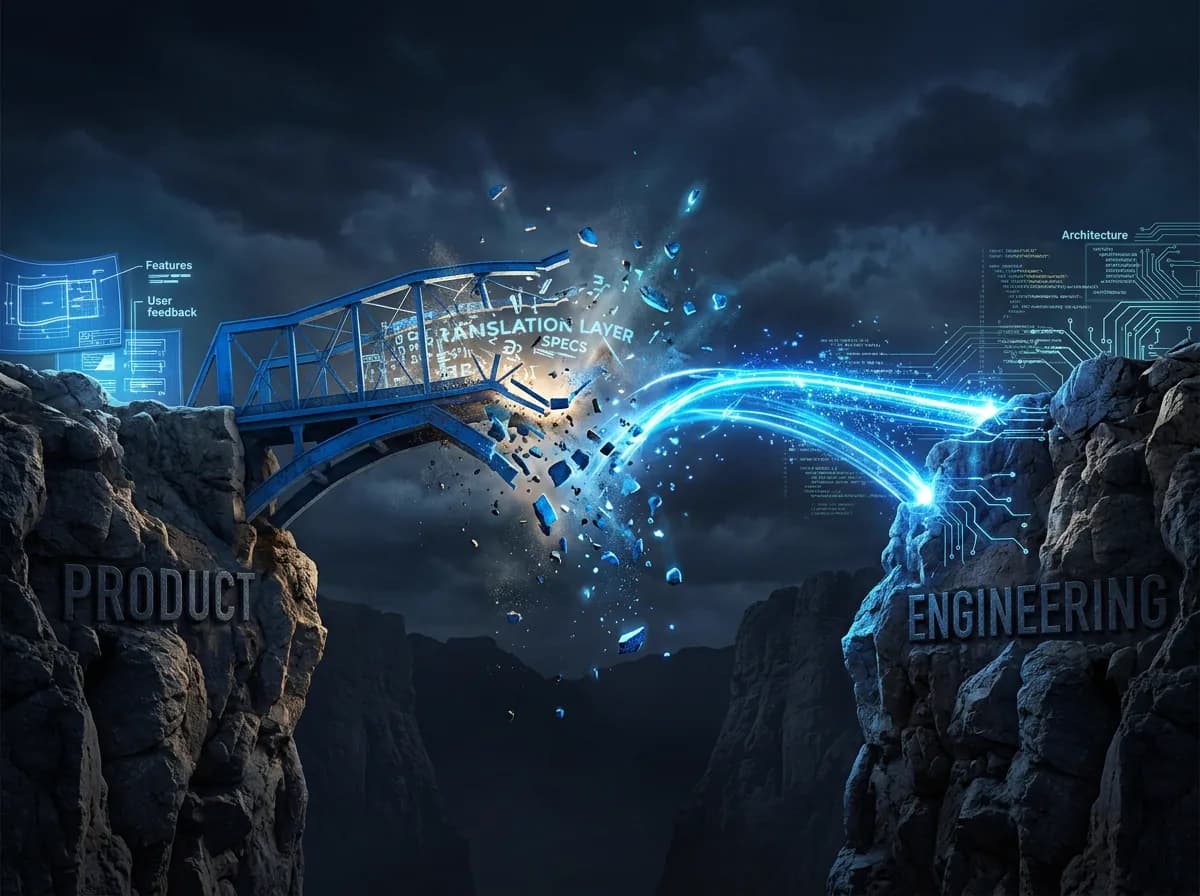

Product Discovery Is Dead: Why Prototyping Replaced It

TL;DR

- AI coding tools collapsed the gap between "should we build this?" and "here, try it" from months to hours

- Traditional discovery (interviews, surveys, prototypes-that-aren't-really-prototypes) optimised for a world where building was expensive, so you researched first to reduce waste

- The new discovery loop is: build the thing, put it in front of users, measure what happens, decide whether to invest further

Product discovery was designed to answer a question: "Is this worth building?"

The question made sense when building was expensive. A six-week discovery sprint that prevented a six-month engineering dead-end was a rational investment. Spend $50K on research to avoid wasting $500K on the wrong feature. The economics were obvious.

Those economics no longer hold.

When I can go from idea to working prototype in a weekend (with real data, real UI, real interactions, not a Figma mockup with "imagine this button does something"), the cost of building has dropped below the cost of traditional discovery. The research phase that was supposed to save money now costs more than just building the thing and testing it.

This isn't hypothetical. I built two production SaaS platforms using AI tools at every layer of the stack. Not prototypes. Production software. The build velocity that made that possible also makes traditional discovery cadences look like bureaucratic theatre.

How much does a traditional discovery sprint actually cost?

Let me describe the standard discovery process at most product organisations I've worked with or advised.

Weeks 1 to 2: Problem framing. Stakeholder interviews. Competitive analysis. "Opportunity sizing." Multiple documents that synthesise what everyone already suspects.

Weeks 3 to 4: User research. Recruit participants (this alone can take a week if your research ops aren't mature). Conduct 8 to 12 interviews. Synthesise findings into themes. Present to stakeholders.

Weeks 5 to 6: Solution exploration. Wireframes. Design concepts. Maybe a clickable prototype in Figma. Usability testing of the prototype. Refinement. Final recommendation.

Total elapsed time: 6 weeks. Total cost: 1.5 to 2 full-time equivalents for 6 weeks, plus participant incentives, plus the opportunity cost of not building anything else during that period. Call it $40K to $80K fully loaded.

And at the end, what you have is a recommendation. A hypothesis. An informed guess about whether this feature is worth building and what it should look like. You still haven't built anything. The engineering work hasn't started.

I'm not saying this process is always wrong. For high-stakes, high-ambiguity problems (new market entry, platform architecture decisions, regulatory compliance), deliberate research is worth the investment. You don't vibe-code your way into a regulated market.

But for the vast majority of product work (new features, workflow improvements, UX experiments, integration opportunities), the discovery tax is now higher than the build cost. That's a broken incentive.

The prototype is the research

When build cost drops below research cost, the rational move is to make the build the research.

This isn't "move fast and break things." It's disciplined experimentation with a lower-cost instrument. The prototype isn't a throwaway artifact that exists to inform a specification. The prototype IS the specification. If users engage with it, you iterate. If they don't, you've learned something in days instead of weeks, at a fraction of the cost.

The workflow looks like this:

Day 1: Build. Take the problem hypothesis and build a working version. Not wireframes. Not a clickable mockup. A functional prototype with real data and real interactions. AI coding tools make this possible for product people who couldn't previously write production code. That's the product builder shift in practice.

Days 2 to 3: Test. Put it in front of 5 to 8 real users. Not a usability test of a mockup. A trial of a working product. Watch what they do. Listen to what they say. Measure what happens. The signal from a working prototype is categorically different from the signal from a conceptual walkthrough.

Days 4 to 5: Decide. Based on real usage data (not interview responses about hypothetical willingness), make the call. Kill it, iterate on it, or invest in production-hardening it.
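One way to keep the decide step honest is to agree on thresholds before the trial starts, so the data makes the call rather than the team's enthusiasm. Below is a minimal Python sketch of that idea. Everything in it is a hypothetical placeholder rather than a prescribed method: the `TrialResult` fields, what counts as "adopted" or "returned", and the threshold values should all be set for your own product.

```python
from dataclasses import dataclass


@dataclass
class TrialResult:
    users_tested: int    # users who were given the working prototype
    users_adopted: int   # users who used the core feature without prompting
    users_returned: int  # users who came back to it on a later day


# Hypothetical thresholds: agree on these before the trial, not after.
KILL_BELOW = 0.25    # adoption rate under which the idea is dropped
INVEST_AT = 0.60     # adoption rate at which production-hardening is considered
RETURN_AT = 0.30     # return rate also required before investing


def decide(result: TrialResult) -> str:
    """Map one prototype trial to 'kill', 'iterate', or 'invest'."""
    if result.users_tested <= 0:
        raise ValueError("at least one user must have tried the prototype")
    adoption = result.users_adopted / result.users_tested
    returning = result.users_returned / result.users_tested
    if adoption < KILL_BELOW:
        return "kill"
    if adoption >= INVEST_AT and returning >= RETURN_AT:
        return "invest"
    return "iterate"


print(decide(TrialResult(users_tested=6, users_adopted=5, users_returned=3)))  # invest
```

With six testers, five adopting and three returning, it prints `invest`; one adopter out of eight would return `kill`. Anything in between lands on `iterate`, which is usually the honest answer after a first trial.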

Total elapsed time: one week. Total cost: one person's time for a week, plus whatever cloud and AI inference costs the prototype incurred (negligible). Call it $3K to $5K fully loaded.

You just compressed a $60K, 6-week discovery process into a $4K, 5-day build-and-test cycle. And the output is higher quality, because you tested a real product instead of a concept.
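The comparison is easy to check. This Python sketch uses only the cost ranges quoted in this section, plus one assumption: a five-day working week, so the six-week sprint counts as 30 working days against the 5-day cycle.

```python
# Cost ranges quoted above, fully loaded, in USD.
DISCOVERY_COST = (40_000, 80_000)
BUILD_TEST_COST = (3_000, 5_000)

# Assumption: five working days per week.
DISCOVERY_DAYS = 6 * 5
BUILD_TEST_DAYS = 5


def midpoint(cost_range: tuple[int, int]) -> float:
    """Middle of a (low, high) cost range."""
    return sum(cost_range) / 2


cost_ratio = midpoint(DISCOVERY_COST) / midpoint(BUILD_TEST_COST)
time_ratio = DISCOVERY_DAYS / BUILD_TEST_DAYS

# Least favourable pairing: cheapest discovery vs most expensive build-and-test.
worst = DISCOVERY_COST[0] / BUILD_TEST_COST[1]
# Most favourable pairing: priciest discovery vs cheapest build-and-test.
best = DISCOVERY_COST[1] / BUILD_TEST_COST[0]

print(f"midpoint: {cost_ratio:.0f}x cheaper, {time_ratio:.0f}x faster")
print(f"cost ratio range: {worst:.0f}x to {best:.0f}x")
```

It prints a midpoint of 15x cheaper and 6x faster, and a cost ratio between 8x and 27x depending on where in each range a given team sits.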

Why the signal is better

There's a well-known problem in user research: people are unreliable narrators of their own behaviour. Ask someone "Would you use this feature?" and they'll say yes because they want to be helpful, because they can imagine a scenario where they might, because saying no feels rude.

Show someone a working feature and watch whether they actually use it. That's a different signal entirely.

When I built the AI growth coach in OpenChair, I could have spent weeks interviewing salon owners about whether they'd value AI-generated business insights. They would have said yes. Of course they would. "Would you like a smart assistant that tells you how to grow your business?" Nobody says no to that.

Instead, I built it. Put it in front of venue owners. Watched what happened. Some features got immediate, enthusiastic adoption. Others got polite indifference. The pattern wasn't what interviews would have predicted. The features that resonated were the mundane operational ones (automated reminders, smart scheduling suggestions), not the flashy strategic ones (growth analysis, competitive benchmarking).

That's the kind of insight you only get from real usage. It challenges the product instinct that says "the exciting feature is the valuable one." Often the boring feature is the one that actually changes behaviour.

What discovery becomes

Discovery doesn't disappear. It transforms.

Problem discovery stays. Understanding the customer's world, their pain points, their workflows, their language. This still requires conversations, observation, and empathy. AI doesn't replace the need to understand your customer. It accelerates how quickly you can test whether your understanding is correct.

Solution discovery collapses into building. The "should we build option A or option B?" question gets answered by building both and measuring which one users prefer. When prototyping cost approaches zero, A/B testing prototypes becomes cheaper than debating which one to build.
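"Measuring which one users prefer" can start very simply: log what each tester does and compare how many users of each variant reach the outcome you care about. The Python sketch below assumes a hypothetical event log of `(user_id, variant, event_name)` tuples and a hypothetical goal event called `completed_task`; real instrumentation will look different.

```python
from collections import defaultdict

# Hypothetical event log: (user_id, variant, event_name).
events = [
    ("u1", "A", "opened"), ("u1", "A", "completed_task"),
    ("u2", "A", "opened"),
    ("u3", "A", "opened"), ("u3", "A", "completed_task"),
    ("u4", "B", "opened"), ("u4", "B", "completed_task"),
    ("u5", "B", "opened"), ("u5", "B", "completed_task"),
    ("u6", "B", "opened"), ("u6", "B", "completed_task"),
]


def completion_rates(log, goal="completed_task"):
    """Share of each variant's users who reached the goal event at least once."""
    users = defaultdict(set)    # variant -> users who saw it
    reached = defaultdict(set)  # variant -> users who hit the goal
    for user, variant, event in log:
        users[variant].add(user)
        if event == goal:
            reached[variant].add(user)
    return {v: len(reached[v]) / len(users[v]) for v in users}


rates = completion_rates(events)
print(rates)  # A: 2 of 3 users reached the goal, B: 3 of 3
```

Counting users rather than events stops one enthusiastic tester from skewing the result. With only a handful of users per variant, a gap like this is a directional signal rather than a statistically significant one, so it works best alongside watching the sessions themselves.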

Validation becomes continuous. Instead of a discrete "discovery phase" followed by a "delivery phase," you get a continuous loop. Build a small thing. Test it. Learn. Build the next small thing. The PM skillset shifts from "research analyst who writes specifications" to "context curator who runs rapid experiments." I formalise this loop in the dual backlog system in the handbook.

The teams I've seen adapt fastest are the ones that stopped treating discovery and delivery as separate phases and started treating them as a single loop with a faster cycle time. The ones that are struggling are the ones defending the 6-week discovery sprint because "we need to be rigorous." Rigour isn't about process length. It's about evidence quality. A working prototype tested with real users is more rigorous than a beautifully formatted research deck based on 10 interviews.

The hard part nobody talks about

There's a skill gap that makes this transition difficult. The product manager who's brilliant at research synthesis, stakeholder interviews, and specification writing may not be comfortable building a working prototype in a weekend. That's a real gap, and pretending it doesn't exist is dishonest.

But the gap is closing fast. AI coding tools (Claude Code, Cursor, Copilot) have lowered the bar for "can build a functional prototype" from "professional software engineer" to "understands the problem and can describe what they want." You don't need to be a developer. You need to be able to articulate a clear product vision and iterate on AI-generated code.

The product leaders who embrace this are going to run circles around the ones who don't. Not because they're smarter. Because their feedback loop is 10x faster. And in product development, speed of learning is the only sustainable advantage.

Stop reading about this shift. Start shipping.

Frequently Asked Questions

Doesn't this just produce sloppy, unconsidered features?

Only if you skip the evaluation step. "Build fast" doesn't mean "ship without thinking." It means "get to testable artifacts faster." The discipline shifts from "think carefully before building" to "build quickly, evaluate rigorously, kill fast." The net result is more features tested and fewer bad features shipped, because you're making decisions based on real usage data instead of hypothetical research.

What about accessibility, performance, and production quality?

Prototypes built for testing don't need production-grade accessibility or performance. They need to be functional enough to test the core hypothesis. If the hypothesis validates, you invest in production-hardening: accessibility audits, performance optimisation, edge case handling. The prototype-first approach front-loads learning and back-loads polish. That's the right sequence.

Doesn't this require product managers to code?

It requires product managers to build, which is increasingly possible without traditional coding skills. AI coding tools can generate functional prototypes from detailed descriptions. The PM's job is to describe the problem clearly, evaluate the output critically, and iterate on the direction. That said, PMs who develop basic technical literacy will get better results from AI tools. Understanding what a database schema looks like, how an API works, and what "state management" means makes you a better collaborator with AI coding assistants.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently building and shipping production AI platforms solo. Written from that experience.