The Cartel Problem: Why AI Stalls at the Industry Gate

TL;DR

- AI adoption is blocked less by technical limitations than by structural barriers: regulatory capture, professional guilds, procurement inertia, and incumbents who profit from the status quo

- I spent nine years shipping products into AFSL-regulated environments with Tier 1 banks, where compliance was the binding constraint, not capability

- The product leaders who succeed in regulated industries will be the ones who learn to navigate structural resistance, not just technical complexity

Every AI product conference features the same narrative. The technology is ready. The models are capable. The ROI is proven. Adoption should follow.

It doesn't. And the reason isn't hallucination rates or eval coverage or inference costs. Those are real problems, but they're solvable problems. Engineers are solving them right now.

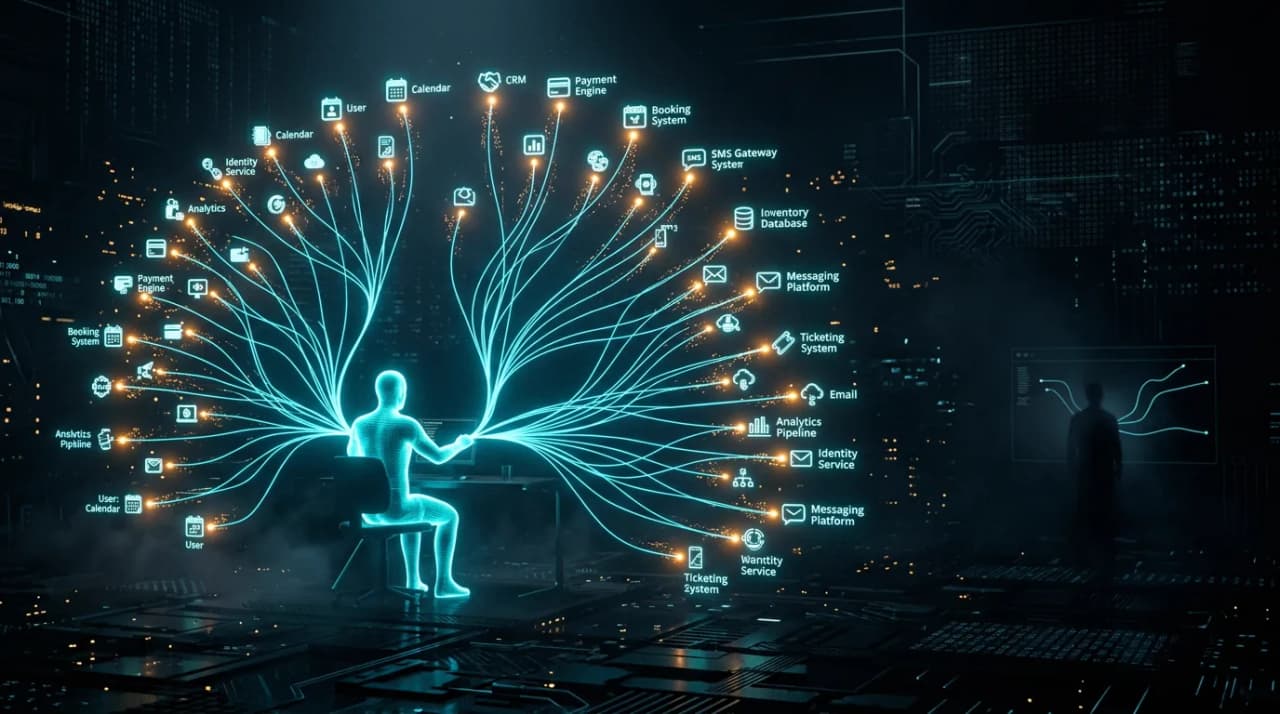

The harder problems are structural. Industries have immune systems, and they're working exactly as designed: to resist change.

The immune system

I spent nine years building and shipping products into Australia's financial services sector. My clients included CBA, NAB, and ANZ. Every product touched regulated workflows: property valuations, risk assessments, lending decisions, compliance reporting. These were AFSL-regulated environments, where a product error isn't just a bug; it's a potential breach.

The technical work was straightforward relative to the structural work. Building an AI-assisted property valuation was an engineering problem. Getting it approved for use in a lending decision was a political one. And the politics operated at multiple levels simultaneously.

The regulator. APRA and ASIC don't move fast. They shouldn't. Their job is to protect consumers and maintain system stability. But the regulatory feedback cycle for novel AI applications in financial services is measured in years, not months. By the time guidance is issued, the technology has moved on. This creates a permanent lag where the safest regulatory position is "don't use it yet."

The compliance function. Internal compliance teams at Tier 1 banks are measured on risk reduction, not innovation enablement. An AI system that's 99% accurate is, from a compliance perspective, a system that's wrong 1% of the time. A human-only process that's 95% accurate but follows documented procedures is, from a compliance perspective, compliant. The incentive structure rewards process adherence over outcome quality.
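To make the asymmetry concrete, here is the arithmetic for a hypothetical batch of 1,000 decisions (an illustrative volume, not a figure from my client work):

```latex
\begin{aligned}
\text{AI errors} &= 1000 \times (1 - 0.99) = 10 \\
\text{Human-only errors} &= 1000 \times (1 - 0.95) = 50
\end{aligned}
```

The human-only process produces five times as many errors, yet it is the one that passes compliance review, because it follows documented procedures.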

The professional guild. Valuers, actuaries, financial advisers: each profession has a guild or association that controls accreditation, sets standards, and lobbies on regulation. These guilds serve legitimate purposes. They also function as cartels that resist any technology threatening their members' relevance. When we introduced AI-assisted valuation tools, the pushback wasn't about accuracy. It was about jurisdiction: "Can a machine make this determination, or must a qualified valuer sign off?" Real estate is a textbook case: Google's move into native property listings threatens the aggregator model, but the guild and regulatory structures have kept incumbents insulated for over a decade.

The procurement machine. Enterprise procurement at major banks takes six to eighteen months. Vendor risk assessments, security reviews, integration architecture reviews, data sovereignty checks, third-party audit requirements. Each step is individually reasonable. In aggregate, they form a barrier that favours incumbents with existing contracts over new entrants with better technology.

None of these are technical problems. They're structural barriers. And they exist in every regulated industry: healthcare, legal, education, insurance, government.

The cartel dynamic

Calling these structures "cartels" sounds provocative, but the economic behaviour matches. A cartel is a group of independent actors who coordinate (formally or informally) to restrict competition and maintain pricing power. That describes how many professional and regulatory structures operate, even when the individual participants are acting in good faith.

A hospital system that requires AI diagnostic tools to be supervised by a licensed physician isn't necessarily wrong. But it's creating a structural dependency on the physician guild that limits how AI can be deployed, regardless of whether the AI outperforms the physician on the specific diagnostic task. The physician's licence becomes a toll booth, not a quality gate.

A legal profession that restricts AI-generated contract review to "advisory only" status, requiring a qualified lawyer to sign off, isn't necessarily wrong either. But it maintains the lawyer's billing rate as the binding cost floor for legal services, regardless of whether the AI review is more thorough and consistent than what a junior associate produces.

These structures don't need to be coordinated to function as barriers. They just need to exist. The regulatory framework, the professional accreditation system, the procurement process, and the insurance liability model all independently create friction that compounds into near-impermeability for AI adoption at the industry level.

How should product teams navigate structural barriers to AI adoption?

If you're building AI products for regulated industries, technical capability is table stakes. The real product work is structural navigation. The business viability framework I use starts here: can you navigate the structural barriers in your target market, and do the unit economics still work once compliance costs are factored in? This requires a different playbook.

Design for the compliance case, not the user case. Your first customer isn't the end user. It's the compliance officer who has to approve the deployment. If you can't articulate why your AI system is auditable, explainable, and within regulatory boundaries, you don't have a product. You have a demo. The risk-tiered governance framework I developed at Cotality was specifically designed to give compliance teams a structured way to say "yes" rather than defaulting to "no."

Co-design with the guild, not against it. When we introduced AI-assisted valuation tools, the breakthrough wasn't proving the AI was accurate. It was involving valuers in the design process so the tool augmented their expertise rather than threatening it. The final product was positioned as "making valuers faster and more consistent," not "replacing valuers." Same technology. Different framing. One gets adoption. The other gets blocked.

Build the audit trail as a feature, not an afterthought. In regulated environments, the ability to explain every AI decision is a product requirement, not a nice-to-have. This means trace logging, confidence scoring, human-override paths, and version-controlled prompt management. These aren't overhead. They're the features that get you through procurement.
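As a minimal sketch of what this can look like at the data level, here is a hypothetical Python audit record for a single AI-assisted decision. It ties together trace logging (a trace ID and a JSON log line), confidence scoring, a human-override path, and a version-controlled prompt reference. The field names, the 0.85 review threshold, and the helper functions are illustrative assumptions, not the governance framework I used at Cotality or any particular library's API.

```python
# Hypothetical audit record for one AI-assisted decision. Field names and the
# review threshold are illustrative assumptions, not a real product's schema.
import hashlib
import json
from dataclasses import asdict, dataclass, field, replace
from datetime import datetime, timezone
from typing import Optional

# Assumed threshold: below this confidence, a qualified human must review.
REVIEW_THRESHOLD = 0.85


@dataclass(frozen=True)  # frozen: records are never edited in place
class AuditRecord:
    trace_id: str
    model_version: str
    prompt_version: str  # e.g. a git tag or commit of the prompt template
    prompt_sha256: str   # hash of the exact rendered prompt sent to the model
    output: str          # the AI's original output, always preserved
    confidence: float    # score in [0, 1]
    needs_review: bool
    reviewer: Optional[str] = None
    override_output: Optional[str] = None
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def record_decision(trace_id: str, model_version: str, prompt_version: str,
                    rendered_prompt: str, output: str,
                    confidence: float) -> AuditRecord:
    """Create an audit record and flag low-confidence outputs for review."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    return AuditRecord(
        trace_id=trace_id,
        model_version=model_version,
        prompt_version=prompt_version,
        prompt_sha256=hashlib.sha256(rendered_prompt.encode("utf-8")).hexdigest(),
        output=output,
        confidence=confidence,
        needs_review=confidence < REVIEW_THRESHOLD,
    )


def apply_override(record: AuditRecord, reviewer: str,
                   override_output: str) -> AuditRecord:
    """Human-override path: return a new record; the original is untouched."""
    return replace(record, reviewer=reviewer,
                   override_output=override_output, needs_review=False)


def final_output(record: AuditRecord) -> str:
    """The decision actually used downstream: the override if one exists."""
    if record.override_output is not None:
        return record.override_output
    return record.output


def to_log_line(record: AuditRecord) -> str:
    """Serialise the record as one JSON line for an append-only trace log."""
    return json.dumps(asdict(record), sort_keys=True)
```

For example, a record created with a confidence of 0.72 is flagged for review; calling `apply_override` returns a new record whose `final_output` is the reviewer's value, while the AI's original output stays in the log. Freezing the record and hashing the rendered prompt are deliberate choices in this sketch: an auditor can see exactly what the model was asked, which prompt version produced it, and who changed the result.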

Time your market entry to regulatory catalysts. Structural barriers erode during regulatory transitions. When APRA updated its prudential standards, there was a brief window where banks needed new tooling to comply and were willing to evaluate solutions they wouldn't have considered six months earlier. The best time to enter a regulated market isn't when your product is ready. It's when the regulatory landscape is shifting.

The atoms-versus-bits problem

There's a broader version of this argument. Technology has moved fast in the digital world for decades. In the physical world (the built environment, healthcare, education, legal systems, infrastructure), progress has stalled. The regulatory and structural frameworks that govern atoms-based industries haven't evolved at the pace that technology demands.

AI hits this wall harder than previous technologies because AI's biggest potential impact is in atoms-based industries. Healthcare, where AI diagnosis could save lives. Legal services, where AI could make justice accessible. Education, where AI could provide one-to-one tutoring at scale. Construction, where AI could reduce cost overruns. These are the domains where AI's economic value is highest and where structural barriers are thickest.

The digital-native industries (SaaS, media, advertising) will adopt AI fastest because they have the thinnest structural barriers. The atoms-heavy industries will adopt AI slowest despite having the most to gain. This creates a paradox where AI's transformative potential is inversely correlated with its adoption speed.

Product leaders who understand this will avoid the trap of building for the easiest market. The biggest opportunities are behind the thickest barriers. I'm testing this thesis directly with solo-built vertical SaaS for physical-work industries: beauty venues and trade contractors, where the structural barriers are real but the build cost has dropped enough to make them worth navigating. Getting through those barriers requires structural literacy, not just technical capability.

The long game

I don't expect structural barriers to disappear. Regulation serves real purposes. Professional standards exist for good reasons. Procurement processes protect organisations from genuine risks.

What I expect is erosion. Demographic pressure (fewer young professionals entering guilded professions), economic pressure (AI-enabled competitors in less regulated jurisdictions), and political pressure (governments realising their regulatory frameworks are creating competitive disadvantage) will gradually weaken the barriers.

The organisations that position themselves on the right side of that erosion, building compliant AI products that work within current structures while being ready to scale as structures evolve, will capture disproportionate value.

The ones waiting for the barriers to drop before they start building will find that the market has already been won by the time they enter. The deployment gap isn't just about organisational readiness. It's about the structural immune systems of entire industries resisting the change.

The technology is ready. The structures aren't. That gap is the product opportunity of the decade.

Frequently Asked Questions

Is this a problem unique to Australia or does it apply globally?

The specific regulatory bodies differ, but the structural pattern is universal. The FDA in the US, the GMC in the UK, FINMA in Switzerland: every regulated jurisdiction has professional guilds, regulatory frameworks, and procurement processes that create similar barriers to AI adoption. The pattern is structural, not geographic.

Should AI companies avoid regulated industries?

No. Regulated industries represent the largest addressable markets and the most durable competitive advantages once you're established. The barriers that make entry hard also make displacement hard. The strategy is to invest in structural navigation capability as a core competency, not to avoid the sectors where it's needed.

How long until structural barriers meaningfully erode?

It varies by industry, but I expect five to fifteen years for significant erosion. Financial services is moving fastest because the economic pressure is strongest and the regulatory bodies are relatively sophisticated. Healthcare will take longer because the liability model (malpractice risk) creates a structural barrier independent of regulation. Legal will change faster than most expect because AI contract review is already demonstrably superior and the client base (corporate counsel) has the sophistication to demand it.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience with enterprise AI at Cotality.