The Winning Agent Had No Better Model. It Got Shit Done.

TL;DR

- The fastest-growing AI tool of 2026 didn't win on model quality. It won by treating the model as a reasoning engine for persistent, local actions instead of another chat window

- The architectural choices that drove adoption (filesystem access, terminal execution, local memory) are a blueprint for where enterprise agents need to go

- Giving autonomous agents real system access forces hard questions about sandboxing and security that the industry has been deferring

This might be the most compressed product lifecycle in modern tech.

OpenClaw started as a weekend build. Open-source release. Viral adoption. Over 145,000 GitHub stars. An acqui-hire by OpenAI to drive their multi-agent strategy. The whole arc — from side project to industry-defining tool to foundation-backed independent project — happened in weeks, not years.

The speed is undeniable and worth noting. But if you look past the viral metrics, the real story isn't about velocity. It's about architecture. And it carries a lesson that every product builder working on AI agents needs to internalise.

OpenClaw didn't win because it had a novel foundation model. It didn't win because it had better benchmarks. It won because it solved the gritty reality of execution. It didn't chat about tasks. It completed them.

What is the execution gap in enterprise AI?

Enterprise AI is currently stuck in the chat window.

I've written about this before: the AI detour problem, the blank page problem, the gap between conversational AI and actual work. But it's worth stating plainly: most AI tools today are sophisticated conversation partners that produce text. They draft. They suggest. They summarise. They explain. And then the user has to take that text and manually do something with it.

The tool that broke out did something different. It treated the model not as a conversational interface, but as a reasoning engine for persistent, local actions.

The distinction matters enormously.

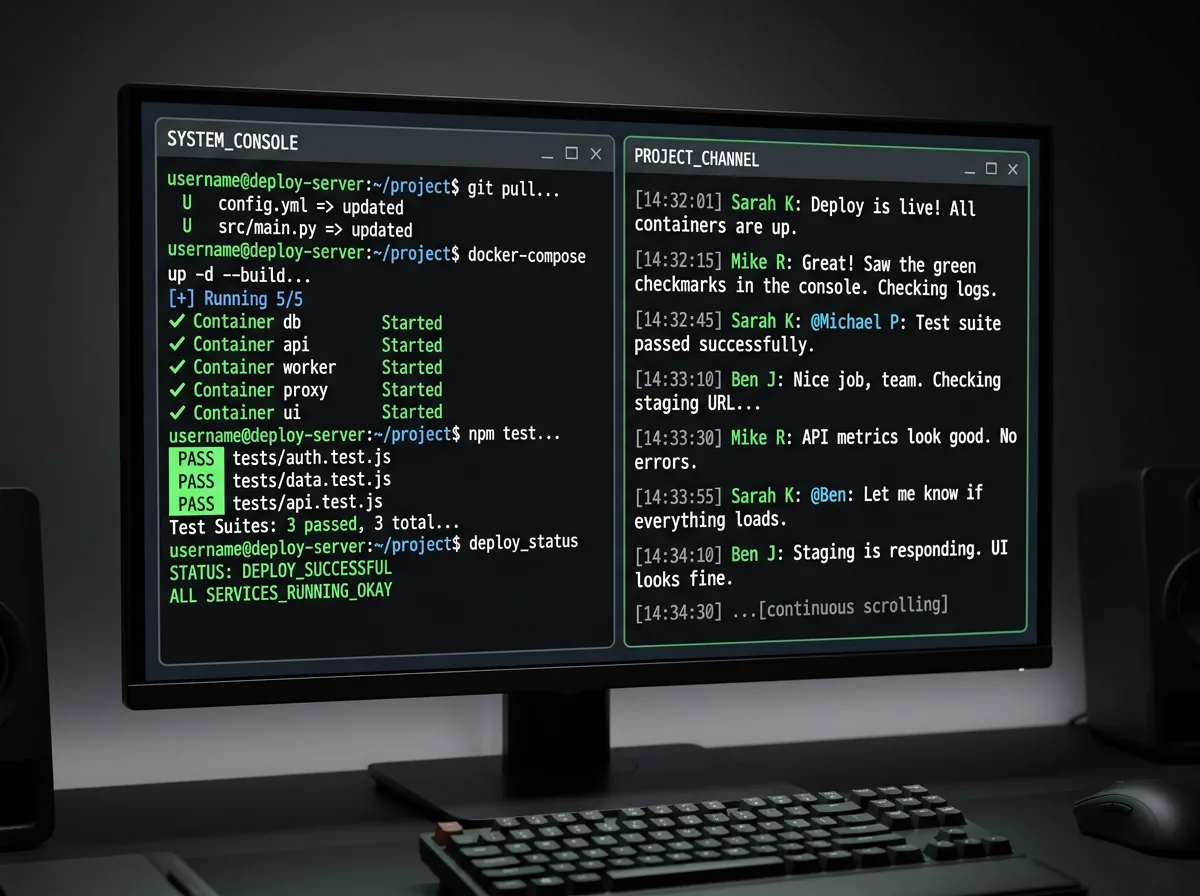

A chat agent generates a deployment script and shows it to you in a conversation window. You copy it. You paste it into your terminal. You run it. You deal with the errors. You go back to the chat. You explain what happened. You get a revised script. You copy, paste, run again.

An execution agent hooks directly into your terminal and runs the script. It sees the output. It sees the errors. It fixes them. It runs again. The loop is closed. The human isn't a clipboard.
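The closed loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not OpenClaw's implementation: `run_until_success` and `demo_revise` are hypothetical names, and the `revise` callback stands in for the model call that would read the error output and propose a corrected command.

```python
import subprocess
import sys
from typing import Callable, List, Tuple


def run_until_success(
    command: List[str],
    revise: Callable[[List[str], str], List[str]],
    max_attempts: int = 3,
) -> Tuple[bool, List[str]]:
    """Run a command; on failure, pass stderr to `revise` and retry."""
    transcript: List[str] = []
    for _ in range(max_attempts):
        result = subprocess.run(command, capture_output=True, text=True)
        transcript.append(result.stdout + result.stderr)
        if result.returncode == 0:
            return True, transcript
        # Assumption: a real agent would call a model here.
        # In this sketch it is a plain callback.
        command = revise(command, result.stderr)
    return False, transcript


def demo_revise(command: List[str], stderr: str) -> List[str]:
    # Hypothetical stand-in for the model: replace the failing
    # script with one that succeeds.
    return [sys.executable, "-c", "print('deployed')"]


# First attempt fails with "boom" on stderr; the revised command succeeds.
failing = [sys.executable, "-c", "import sys; sys.exit('boom')"]
ok, log = run_until_success(failing, demo_revise)
```

The point of the design is that the command output goes straight back into the next attempt, so the human never has to relay errors by hand. The `max_attempts` cap is one simple way to stop a loop that is not converging.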

This is the difference between an agent that talks about work and an agent that does work. And the market's reaction (a six-figure GitHub star count within weeks) tells you exactly which one users actually want.

Why local matters

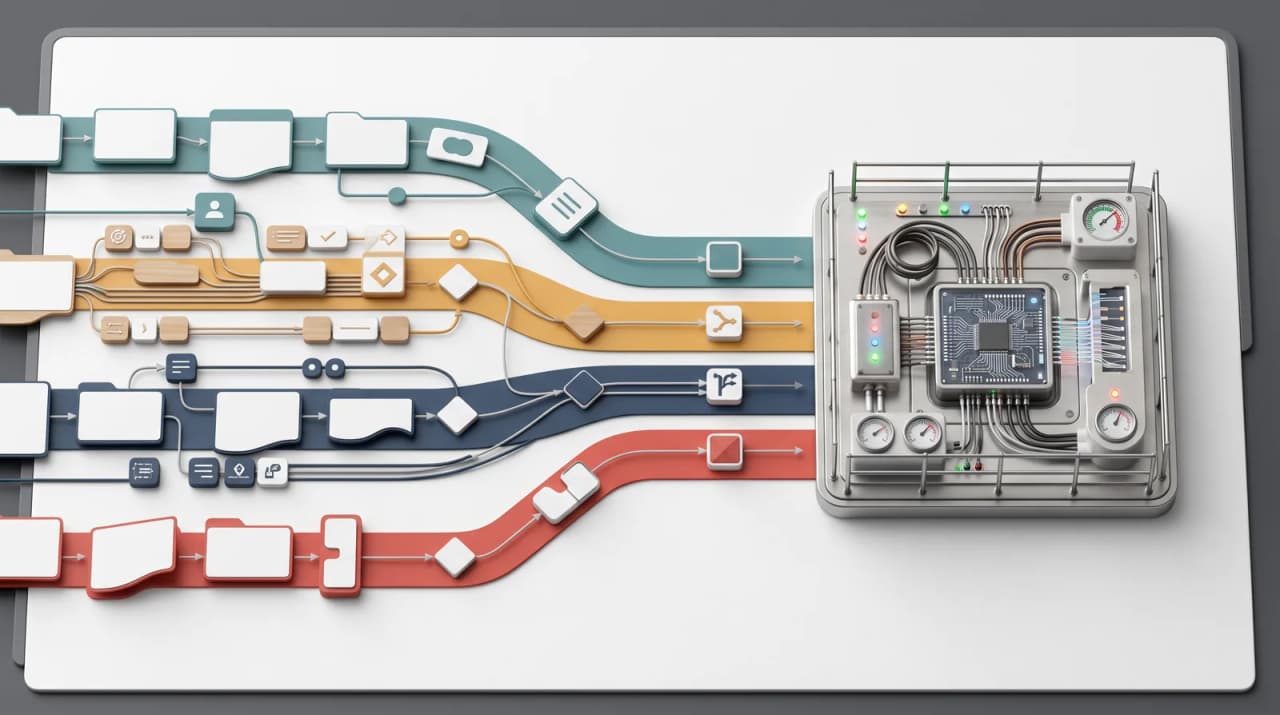

Three architectural decisions defined OpenClaw's success, and all three are instructive for product builders.

Direct system integration

The tool hooks into local filesystems, terminals, and system processes. It doesn't just reason about your code. It reads it from disk, edits it, and runs it. It doesn't just suggest a command. It executes the command and processes the output.

This seems obvious in hindsight, but it's a departure from how most AI tools are built. Most AI products operate in a sandboxed cloud environment, isolated from the user's actual working context. They work with whatever text the user pastes in. The local-first approach inverts this: the agent lives where the work lives.

For product builders, the implication is clear. The closer your agent is to the actual system of execution (the filesystem, the database, the API, the terminal), the less friction exists between reasoning and action. Every layer of abstraction between the agent and the work is a handoff where value leaks.

Persistent memory

The tool stores interaction history locally. It remembers what you were debugging yesterday. When you start a new session, you don't cold-start from zero. The context from previous sessions carries forward.

This solves what I'd call the cold-start UX problem that plagues every chat-based AI tool. Every new conversation starts from nothing. You re-explain your project. You re-describe your constraints. You re-establish context that the tool should already know. It's like working with a colleague who gets amnesia every night.

Persistent local memory changes the interaction model fundamentally. The agent accumulates context over time. It knows your codebase, your preferences, your patterns, your common mistakes. Each session starts from a richer baseline than the last. The agent gets more useful the longer you use it, a genuine flywheel effect that drives retention.
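To make the idea concrete, here is a minimal sketch of session memory kept in a local JSON file. It is an assumption-laden illustration, not a description of how any particular tool stores its history: the `LocalMemory` class, the file name, and the example notes are all hypothetical.

```python
import json
import tempfile
from pathlib import Path
from typing import List


class LocalMemory:
    """Hypothetical local store: notes persist on disk between sessions."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Load whatever earlier sessions wrote, or start empty.
        self.notes: List[str] = (
            json.loads(path.read_text()) if path.exists() else []
        )

    def remember(self, note: str) -> None:
        self.notes.append(note)
        self.path.write_text(json.dumps(self.notes, indent=2))

    def context(self, limit: int = 5) -> str:
        """Return the most recent notes, ready to prepend to a prompt."""
        return "\n".join(self.notes[-limit:])


# A temporary directory keeps the demo self-contained.
store = Path(tempfile.mkdtemp()) / "agent_memory.json"

first_session = LocalMemory(store)
first_session.remember("Debugging flaky login test in auth/test_login.py")

# A new session reads what the previous one wrote: no cold start.
second_session = LocalMemory(store)
second_session.remember("User prefers pytest over unittest")
```

A real agent would need more than an append-only list (summarisation, relevance ranking, deletion on request), but the core property is the same: the second session starts from what the first one learned, and the data never leaves the machine.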

Real execution with real consequences

This is where it gets interesting, and uncomfortable.

Giving an autonomous agent terminal access is an operational nightmare. It can read, write, and execute. It can modify files. It can run commands with real side effects. It can touch production systems if you let it.

This capability is what makes the tool powerful. It's also what makes it dangerous. And the project's rapid evolution forced a maturation in sandboxing, permission models, and prompt injection defence that the broader industry has been deferring.

Most AI products have avoided this problem by keeping agents in a conversational sandbox where they can't actually do anything. That's safe. It's also why those products feel like toys. The tool that broke out accepted the risk of real execution and invested in the security architecture required to make it manageable.

For product builders, this is the uncomfortable truth: the agents that users actually want are the ones with real system access. Building those agents responsibly requires solving hard security and governance problems that most teams haven't confronted yet.

The industry signal

OpenAI's acqui-hire — bringing OpenClaw's creator in-house to drive their multi-agent strategy — signals a definitive industry pivot.

The pivot is from single-prompt chat to multi-agent, persistent workflows. From agents that converse to agents that execute. From cloud-hosted reasoning to local-first action. From stateless interactions to contextual, memory-rich sessions.

This is where the agentic patterns I've written about converge. Multi-agent orchestration isn't just about routing tasks to specialised models. It's about agents that can act on local systems, remember previous sessions, coordinate across tools, and execute workflows end-to-end without requiring the user to be a manual bridge between reasoning and action.

The companies building chat wrappers around foundation models should be paying close attention. The market isn't asking for better conversations. It's asking for agents that get things done. And the moat for these tools isn't model quality. It's the data, integrations, and trust that accumulate through real system access.

What this means for product builders

If you're building AI-powered products, three lessons from this compressed lifecycle are worth internalising.

1. Close the execution loop. Every time your user has to copy output from your AI and paste it into another system, you've failed. Build the integration. Connect to the execution environment. Make the agent do the thing, not just describe the thing.

2. Invest in persistent context. Stateless interactions are a dead end for agent products. Your users shouldn't have to re-establish context every session. Build memory: local, private, accumulating. Make each interaction richer than the last. This is what turns a tool into a collaborator.

3. Don't defer the security work. If you're building agents with real system access (and you should be), the sandboxing, permission models, and security architecture need to be day-one infrastructure, not a later concern. The tool that defined this category succeeded in part because it took these problems seriously from the start. Tools that skip this work will produce spectacular, public failures that set the entire category back.

The speed of change will never wind back to what it was. Weekend builds becoming industry-defining tools is the new reality. And frankly, it's an extraordinary time to be a product builder.

Frequently Asked Questions

Is local-first architecture viable for enterprise deployment?

It's more than viable. For certain agent types, it's necessary. Enterprises that are uncomfortable sending proprietary code and data to cloud-hosted AI services are far more receptive to agents that run locally, process data on-device, and maintain context within the organisation's security perimeter. The tradeoff is deployment complexity, but for code-adjacent and data-sensitive use cases, local-first is the architecture enterprises actually want.

How do you balance agent autonomy with safety when it has real system access?

Layered permissions. Start with read-only access and expand based on context and user authorisation. Sandbox destructive operations. Require explicit approval for actions above a defined risk threshold. Log everything. The pattern mirrors how organisations manage human access to production systems: principle of least privilege, audit trails, and escalation for high-risk operations. The tooling for this is maturing rapidly.
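The layered pattern above can be sketched as a small permission gate. This is an illustrative sketch only: the `Risk` levels, the `authorise` function, and the in-memory `audit_log` are hypothetical names chosen for the example, and a production system would persist the log and classify risk far more carefully.

```python
from enum import IntEnum
from typing import Callable, List, Tuple


class Risk(IntEnum):
    READ = 0
    WRITE = 1
    DESTRUCTIVE = 2


# Every decision is recorded, whether allowed or denied.
audit_log: List[Tuple[str, str, bool]] = []


def authorise(
    action: str,
    risk: Risk,
    granted: Risk,
    approve: Callable[[str], bool],
    approval_threshold: Risk = Risk.DESTRUCTIVE,
) -> bool:
    """Allow an action only within the granted level, asking for
    explicit approval at or above the risk threshold."""
    if risk > granted:
        allowed = False  # least privilege: beyond what was granted
    elif risk >= approval_threshold:
        allowed = bool(approve(action))  # escalate to the user
    else:
        allowed = True
    audit_log.append((action, risk.name, allowed))
    return allowed


def deny(action: str) -> bool:
    return False


def accept(action: str) -> bool:
    return True


results = [
    authorise("read README.md", Risk.READ, Risk.READ, deny),
    authorise("write config.yaml", Risk.WRITE, Risk.READ, accept),
    authorise("delete build/", Risk.DESTRUCTIVE, Risk.DESTRUCTIVE, deny),
    authorise("delete build/", Risk.DESTRUCTIVE, Risk.DESTRUCTIVE, accept),
]
```

In this sketch a read within a read-only grant passes without a prompt, a write beyond the grant is refused even if the user would approve it, and a destructive action goes through only when both the grant and an explicit approval are present.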

Does this mean cloud-hosted AI chat products are dead?

No, but they're increasingly commoditised. Chat interfaces will continue to serve discovery, brainstorming, and open-ended exploration. But for productivity, for getting actual work done, the market is moving toward agents that integrate with local systems, maintain context, and execute actions. Products that stay in the chat-only lane will compete on model quality alone, which is a race to the bottom. Products that solve execution win on workflow integration, which is defensible.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience with agentic AI at OpenChair.