AI Strategy Needs Hands-On Experience, Not Slide Decks

TL;DR

- AI strategies are failing because leaders are directing transformation without ever waiting for a token to generate

- The result is predictable: blown margins, impossible accuracy promises, and trust failures from missing guardrails

- Before you approve the next round of AI funding, use the tools until they break, because that's where strategy starts

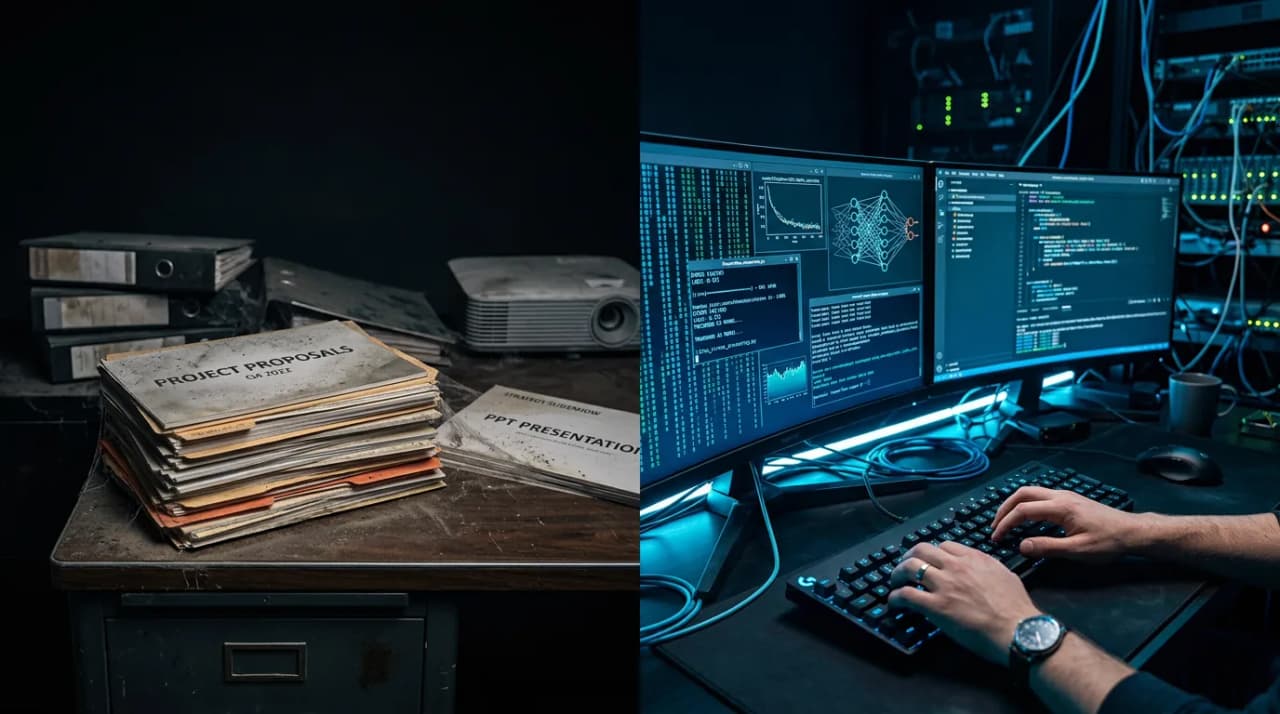

There is too much AI strategy happening in PowerPoint and not enough in the playground.

We are watching a wave of AI strategies fail across enterprises, and they aren't failing because of bad engineering. The models work. The infrastructure scales. The teams are talented.

They're failing because of a fundamental leadership gap.

What does it cost when leaders direct AI strategy without using the tools?

Leaders are demanding "AI transformation" without ever having waited for a token to generate. They haven't felt the latency. They haven't been frustrated by a hallucination. They haven't watched a carefully crafted prompt produce nonsense for one specific input that worked perfectly for every other input. They haven't seen an inference bill scale with usage and felt the pit in their stomach.

And this ignorance is expensive.

When you lead without understanding the materials, you build strategies on fantasy rather than physics. You set timelines based on vendor demos rather than engineering reality. You make promises to customers based on what the model can do in a controlled environment rather than what it does in production with messy, real-world data.

The result is three types of failure, all predictable, all preventable.

Financial failure

Executives treating inference costs like fixed server costs. They budget for AI the way they budget for cloud infrastructure, as a line item that scales predictably with usage. But inference economics don't work that way.

A model that costs $0.002 per call in a demo environment costs the same per call at scale, but the number of calls per user action is often dramatically higher than leadership assumed. A single "AI-powered" feature might require multiple inference calls: one to understand the query, one to retrieve context, one to generate the response, one to check for safety. The per-interaction cost can be 10x or 20x what the slide deck projected.
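The arithmetic above can be sketched in a few lines of Python. The $0.002 per-call price and the four pipeline steps come from the example; the one million monthly actions and the assumption of exactly one call per step are illustrative, not measured figures.

```python
# Illustrative only: every number is an assumption except the
# $0.002-per-call price quoted in the text.
COST_PER_CALL = 0.002  # dollars per inference call

# Hypothetical pipeline behind one "AI-powered" user action,
# assuming exactly one inference call per step.
CALLS_PER_ACTION = {
    "understand_query": 1,
    "retrieve_context": 1,
    "generate_response": 1,
    "safety_check": 1,
}


def cost_per_action(calls_per_action, cost_per_call):
    """Cost of one user action = total inference calls x per-call price."""
    return sum(calls_per_action.values()) * cost_per_call


def monthly_cost(actions_per_month, calls_per_action, cost_per_call):
    """Monthly inference bill for a given volume of user actions."""
    return actions_per_month * cost_per_action(calls_per_action, cost_per_call)


ACTIONS_PER_MONTH = 1_000_000  # assumed volume

# What the slide deck assumed: one call per user action.
slide_deck = monthly_cost(ACTIONS_PER_MONTH, {"single_call": 1}, COST_PER_CALL)
# What the pipeline actually does: four calls per user action.
production = monthly_cost(ACTIONS_PER_MONTH, CALLS_PER_ACTION, COST_PER_CALL)

print(f"Slide deck: ${slide_deck:,.0f}/month")
print(f"Production: ${production:,.0f}/month ({production / slide_deck:.0f}x)")
```

With only four calls per action the bill is already four times the single-call projection. Each additional call behind the feature raises the multiplier further.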

I've written about this in detail: the audit tax, the margin trap of bolting AI onto legacy platforms. Every one of those failure modes traces back to the same root cause: someone approved a budget without understanding what inference actually costs at production volume.

The fix isn't better spreadsheets. It's leaders who have personally watched a usage dashboard tick up and done the per-unit math in their heads. That visceral understanding of cost-per-interaction changes how you prioritise, what you promise, and how you price.

Product failure

Roadmaps that promise deterministic accuracy from a probabilistic engine. "The AI will correctly classify 100% of support tickets." "The agent will never hallucinate." "The system will handle every edge case."

These promises get made in rooms where no one present has ever tried to get a language model to do something consistently across thousands of varied inputs. Anyone who has built with these models knows the truth: they're astonishingly capable on average and maddeningly unreliable on the margins. The 95% Trap, where compounding accuracy across multi-step workflows produces system-level reliability far below what each step achieves, is invisible from a slide deck. It's obvious from a terminal.
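A minimal sketch of the compounding effect, assuming each step succeeds independently and with the same accuracy. Real workflows rarely meet either assumption exactly, so treat the numbers as a rough guide rather than a prediction.

```python
def system_reliability(step_accuracy, steps):
    """Probability that every step succeeds, assuming independent steps."""
    return step_accuracy ** steps


# 95% per-step accuracy across workflows of increasing length.
for steps in (1, 3, 5, 10):
    end_to_end = system_reliability(0.95, steps)
    print(f"{steps:>2} steps at 95% each -> {end_to_end:.1%} end to end")
```

Under these assumptions a five-step workflow lands at roughly 77% end to end, and a ten-step workflow at roughly 60%, even though every individual step looks reliable on its own.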

Product roadmaps built by leaders who haven't experienced this reality promise things the technology cannot deliver. The engineering team knows the promises are impossible. They either push back (and get labelled as blockers) or stay quiet (and deliver something that falls short). Neither outcome is good.

The fix is leaders who have personally tried to get a model to do the thing they're putting on the roadmap. Not watched a demo. Tried it themselves. On real data. With edge cases. Until it broke. That experience calibrates expectations in a way that no briefing document can.

Trust failure

Rolling out "magic" features without human-in-the-loop guardrails, then watching customer trust evaporate the moment the model drifts.

This is the most damaging failure mode because trust, once lost, is extraordinarily expensive to rebuild. A customer who sees your AI feature confidently present wrong information doesn't think "the model had a bad day." They think "this company doesn't know what they're doing." And they tell other customers.

Leaders who've never experienced model drift (the slow degradation of output quality as the distribution of inputs changes, as the model version updates, as the prompts interact with data patterns they weren't designed for) don't build guardrails. They don't insist on human review for high-stakes outputs. They don't budget for monitoring. They don't plan for graceful degradation.

Because from the PowerPoint, the model just works. It's only in the playground that you discover the hundred ways it doesn't.
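For readers who want to see what a guardrail can look like, here is a hedged sketch of routing a model output before a customer sees it. The `ModelOutput` fields, the `REVIEW_THRESHOLD` value, and the route names are all hypothetical. How a confidence score is produced, and whether it is trustworthy, is a separate problem this sketch does not address.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelOutput:
    text: str
    confidence: float  # hypothetical score in [0, 1]; its source is out of scope
    high_stakes: bool  # hypothetical flag set by the calling feature


REVIEW_THRESHOLD = 0.8  # assumed value; would need tuning against real review data


def route(output: Optional[ModelOutput]) -> str:
    """Decide what happens to a model output before a customer sees it."""
    if output is None:
        # Graceful degradation: the model failed or was unavailable.
        return "fallback"
    if output.high_stakes or output.confidence < REVIEW_THRESHOLD:
        # Human-in-the-loop: a person reviews before anything ships.
        return "human_review"
    return "auto_send"


print(route(ModelOutput("Refund approved.", confidence=0.95, high_stakes=True)))
print(route(ModelOutput("Here are our opening hours.", confidence=0.95, high_stakes=False)))
print(route(None))
```

The design choice worth noticing is that high-stakes outputs go to review regardless of how confident the model appears, because confident and correct are not the same thing.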

The literacy requirement

You cannot strategise for a capability you haven't experienced.

This isn't a controversial statement in any other domain. No one would put a leader in charge of a construction project who'd never visited a building site. No one would ask someone to lead a manufacturing transformation who'd never stood on a factory floor. The expectation of firsthand experience is obvious.

But with AI, we've created an exception. We've accepted that leaders can direct AI strategy based entirely on vendor presentations, analyst reports, and internal briefing decks. We've allowed a class of AI decision-makers who have never opened a playground, never written a prompt, never waited for a response and been surprised by what came back.

This has to change. Leaders don't need to become engineers, but AI has a property that makes it uniquely dangerous to direct from a distance: the failure modes are non-obvious. A hallucination doesn't throw an error. Latency spikes aren't visible in a quarterly review. Prompt drift happens slowly. Cost overruns compound quietly.

You only develop intuition for these things by using the technology. By sitting with it. By pushing it until it breaks and understanding why it broke.

The practical step

Before you approve the next round of AI funding, do the practical thing.

Cancel the slide review. Open the tools. Use them on a real problem, not a demo scenario. Try to get the model to do something your roadmap promises. Feed it messy data. Ask it ambiguous questions. Push it into edge cases.

Use it until it fails.

Note where it fails. Note how it fails. Note how confident it sounds while failing. Note how long the response takes. Note how the quality changes when you rephrase the same question. Note what happens when the input is slightly different from what you expected.

That experience, frustrating and humbling as it is, is worth more than every strategy deck your team has produced this year. It's where you develop the intuition that separates leaders who build AI strategies that work from leaders who build AI strategies that sound good in a board meeting and collapse in production. The handbook chapter on AI-first principles codifies this into a decision framework you can apply to your own roadmap.

Once you understand the limitations, you earn the right to lead the strategy. That's the builder-leader identity: strategy grounded in firsthand experience, not borrowed intuition.

Frequently Asked Questions

How much technical depth does a leader actually need?

Enough to know what questions to ask. You don't need to understand transformer architectures or write Python. You need to have used the tools enough to know that accuracy varies with input quality, that inference costs multiply with every extra call behind a feature, that "the AI will just handle it" is never a safe assumption, and that monitoring and evals are infrastructure requirements, not nice-to-haves. An afternoon in the playground teaches more than a week of briefings.

Isn't this what technical advisors and AI teams are for?

Yes, and leaders should rely on their teams' expertise. But there's a difference between trusting your team's recommendations and being unable to evaluate them. A leader who has firsthand experience with the technology can ask sharper questions, spot unrealistic promises, and make better tradeoff decisions. Delegation without understanding isn't leadership. It's hope.

What if the leader tries the tools and finds them underwhelming?

Good. That's the point. If the tools don't match the ambition on the roadmap, you've just saved months of engineering time and millions in budget by discovering the gap before committing. The gap might mean the roadmap needs to be adjusted, the use cases need to be narrowed, or the timeline needs to extend. All of those are better outcomes than discovering the gap after you've shipped and promised customers something you can't deliver.

Logan Lincoln

Product executive and AI builder based in Brisbane, Australia. Nine years in regulated B2B SaaS, currently shipping production AI platforms. Written from experience in AI governance at Cotality.